
P5: Sneaker Recognition


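🍨 This post is a study log from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp).

🍦 Reference article: Pytorch实战 | 第P5周:运动鞋识别 (PyTorch in Practice | Week P5: Sneaker Recognition)

🍖 Original author: K同学啊 | tutoring and custom projects available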

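Z. Takeaways:

- The focus of this week is how to use a dynamic learning rate.

- One option is to write your own function that overwrites the learning rate stored in the optimizer.

- PyTorch also provides an official scheduler interface:

(1) lambda1 = lambda epoch: (0.92 ** (epoch // 20))  # multiply the initial learning rate by 0.92 once every 20 epochs

(2) optimizer = torch.optim.SGD(model.parameters(), lr = learn_rate)

(3) scheduler = torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda=lambda1)  # optimizer is the optimizer defined in (2); lr_lambda selects the adjustment rule

PS: With the official interface, call scheduler.step() once per epoch, after that epoch's optimizer updates, to advance the schedule.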
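To make the rule in (1) to (3) concrete, here is a minimal pure-Python sketch of what LambdaLR computes: the learning rate in use is the initial learning rate multiplied by lr_lambda(epoch), and each step() call advances the epoch counter by one. The class TinyLambdaLR is a hypothetical stand-in written for illustration, not part of PyTorch, and the base learning rate of 1e-4 is taken from the training code later in this post.

```python
class TinyLambdaLR:
    """Hypothetical stand-in that mimics the LambdaLR rule, for illustration only."""

    def __init__(self, base_lr, lr_lambda):
        self.base_lr = base_lr        # initial learning rate given to the optimizer
        self.lr_lambda = lr_lambda    # maps the epoch index to a multiplicative factor
        self.epoch = 0

    def get_lr(self):
        # Learning rate in use = initial learning rate * factor for the current epoch
        return self.base_lr * self.lr_lambda(self.epoch)

    def step(self):
        # Called once per epoch, after that epoch's training
        self.epoch += 1


lambda1 = lambda epoch: 0.92 ** (epoch // 20)
scheduler = TinyLambdaLR(base_lr=1e-4, lr_lambda=lambda1)

lrs = []
for epoch in range(40):
    lrs.append(scheduler.get_lr())  # learning rate used while training this epoch
    scheduler.step()

print(lrs[0], lrs[19], lrs[20], lrs[39])
```

With this rule the learning rate stays at its initial value for epochs 0 to 19 and drops by a factor of 0.92 from epoch 20 onward, so a 40-epoch run sees only one decay.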

I. Preparation

1. Set up the GPU

import os, PIL, pathlib
import torch
import torch.nn as nn
import torchvision
from torchvision import transforms, datasets

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
device

device(type='cuda')

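2. Import the data

import os, PIL, random, pathlib

data_dir = 'C:/Users/Dell/Desktop/ML-K/P5/data/'
data_dir = pathlib.Path(data_dir)
data_paths = list(data_dir.glob('*'))
# path.name is the last path component, regardless of the OS path separator.
# Note: data_dir contains the split folders, so this list holds 'test' and 'train'
# rather than the brand labels; its length (2) happens to equal the number of classes.
classeNames = [path.name for path in data_paths]
classeNames

['test', 'train']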

train_transforms = transforms.Compose([
    transforms.Resize([224, 224]),  # resize every image to the same size
    transforms.ToTensor(),          # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    transforms.Normalize(           # standardize each channel, which helps the model converge
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])  # the commonly used ImageNet channel means and standard deviations
])

test_transforms = transforms.Compose([
    transforms.Resize([224, 224]),  # resize every image to the same size
    transforms.ToTensor(),          # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    transforms.Normalize(           # standardize each channel, which helps the model converge
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])  # the commonly used ImageNet channel means and standard deviations
])
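As a small worked example of the Normalize step, the sketch below applies the per-channel formula (value - mean) / std to a single RGB pixel in plain Python. The helper normalize_pixel and the two sample pixels are hypothetical and exist only to show the arithmetic; the real transform operates on whole tensors.

```python
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]


def normalize_pixel(rgb):
    """Apply (value - mean) / std to each channel of one RGB pixel in [0, 1]."""
    return [(v - m) / s for v, m, s in zip(rgb, MEAN, STD)]


black = normalize_pixel([0.0, 0.0, 0.0])  # darkest value ToTensor can produce
white = normalize_pixel([1.0, 1.0, 1.0])  # brightest value ToTensor can produce

print([round(v, 3) for v in black])
print([round(v, 3) for v in white])
```

After normalization the inputs are no longer confined to [0, 1]; they spread to roughly -2.1 through 2.6 depending on the channel.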

train_dataset = datasets.ImageFolder('C:/Users/Dell/Desktop/ML-K/P5/data/train/', transform = train_transforms)
test_dataset = datasets.ImageFolder('C:/Users/Dell/Desktop/ML-K/P5/data/test/', transform = test_transforms)
train_dataset.class_to_idx

{'adidas': 0, 'nike': 1}
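The mapping above comes from the folder layout: ImageFolder treats each subfolder as a class and numbers the classes in sorted name order. The following sketch imitates that behaviour with the standard library only. The helper folder_names_to_idx is a hypothetical name for illustration, not the torchvision function, and the temporary directory stands in for the train folder.

```python
import os
import tempfile


def folder_names_to_idx(root):
    """Map each subfolder of root to an index, in sorted name order."""
    classes = sorted(entry.name for entry in os.scandir(root) if entry.is_dir())
    return {name: idx for idx, name in enumerate(classes)}


with tempfile.TemporaryDirectory() as root:
    # Create the folders in a different order to show that sorting decides the indices
    for name in ["nike", "adidas"]:
        os.makedirs(os.path.join(root, name))
    class_to_idx = folder_names_to_idx(root)

print(class_to_idx)  # {'adidas': 0, 'nike': 1}
```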

batch_size = 32

train_dl = torch.utils.data.DataLoader(train_dataset,
                                       batch_size=batch_size,
                                       shuffle=True,
                                       num_workers=1)

test_dl = torch.utils.data.DataLoader(test_dataset,
                                      batch_size=batch_size,
                                      shuffle=True,
                                      num_workers=1)

for X, y in test_dl:
    print('shape of X [N, C, H, W]: ', X.shape)
    print('shape of y: ', y.shape, y.dtype)
    break

shape of X [N, C, H, W]: torch.Size([32, 3, 224, 224])
shape of y: torch.Size([32]) torch.int64

II. Build a simple CNN

import torch.nn.functional as F

class Model(nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 12, kernel_size=5, padding = 0),
            nn.BatchNorm2d(12),
            nn.ReLU())
        self.conv2 = nn.Sequential(
            nn.Conv2d(12, 12, kernel_size=5, padding = 0),
            nn.BatchNorm2d(12),
            nn.ReLU())
        self.pool3 = nn.Sequential(
            nn.MaxPool2d(2))
        self.conv4 = nn.Sequential(
            nn.Conv2d(12, 24, kernel_size=5, padding = 0),
            nn.BatchNorm2d(24),
            nn.ReLU())
        self.conv5 = nn.Sequential(
            nn.Conv2d(24, 24, kernel_size=5, padding = 0),
            nn.BatchNorm2d(24),
            nn.ReLU())
        self.pool6 = nn.Sequential(
            nn.MaxPool2d(2))
        self.dropout = nn.Sequential(
            nn.Dropout(0.2))
        self.fc = nn.Sequential(
            nn.Linear(24*50*50, len(classeNames)))

    def forward(self, x):
        batch_size = x.size(0)
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.pool3(x)
        x = self.conv4(x)
        x = self.conv5(x)
        x = self.pool6(x)
        x = self.dropout(x)
        # Flatten to the (batch, features) shape the fully connected layer expects;
        # -1 is inferred automatically and equals 24*50*50 here
        x = x.view(batch_size, -1)
        x = self.fc(x)
        return x

device = 'cuda' if torch.cuda.is_available() else 'cpu'

print('Using {} device'.format(device))

model = Model().to(device)

model

Using cuda device

Model(
  (conv1): Sequential(
    (0): Conv2d(3, 12, kernel_size=(5, 5), stride=(1, 1))
    (1): BatchNorm2d(12, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (2): ReLU()
  )
  (conv2): Sequential(
    (0): Conv2d(12, 12, kernel_size=(5, 5), stride=(1, 1))
    (1): BatchNorm2d(12, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (2): ReLU()
  )
  (pool3): Sequential(
    (0): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  )
  (conv4): Sequential(
    (0): Conv2d(12, 24, kernel_size=(5, 5), stride=(1, 1))
    (1): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (2): ReLU()
  )
  (conv5): Sequential(
    (0): Conv2d(24, 24, kernel_size=(5, 5), stride=(1, 1))
    (1): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (2): ReLU()
  )
  (pool6): Sequential(
    (0): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  )
  (dropout): Sequential(
    (0): Dropout(p=0.2, inplace=False)
  )
  (fc): Sequential(
    (0): Linear(in_features=60000, out_features=2, bias=True)
  )
)
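The in_features=60000 of the final Linear layer follows from the layer sizes printed above. As a quick check, the sketch below traces the spatial size of a 224x224 input through each layer using the standard output-size formula for convolution and pooling. The helper names conv2d_out and maxpool_out are hypothetical and used only for this calculation.

```python
def conv2d_out(size, kernel_size, padding=0, stride=1):
    """Output side length of a square convolution."""
    return (size + 2 * padding - kernel_size) // stride + 1


def maxpool_out(size, kernel_size):
    """Output side length of max pooling whose stride equals its kernel size."""
    return (size - kernel_size) // kernel_size + 1


size = 224
size = conv2d_out(size, 5)   # conv1: 224 -> 220
size = conv2d_out(size, 5)   # conv2: 220 -> 216
size = maxpool_out(size, 2)  # pool3: 216 -> 108
size = conv2d_out(size, 5)   # conv4: 108 -> 104
size = conv2d_out(size, 5)   # conv5: 104 -> 100
size = maxpool_out(size, 2)  # pool6: 100 -> 50

in_features = 24 * size * size  # 24 channels after conv5
print(size, in_features)  # 50 60000
```

If the input resolution or any kernel size changes, the first argument of nn.Linear has to be recomputed in the same way.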

III. Train the model

1. Write the training function

def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # number of training images
    num_batches = len(dataloader)   # number of batches per epoch
    train_loss, train_acc = 0, 0

    for X, y in dataloader:
        X, y = X.to(device), y.to(device)

        # Forward pass: compute the predictions and the loss
        pred = model(X)
        loss = loss_fn(pred, y)

        # Backpropagation
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        # Accumulate the number of correct predictions and the batch loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches
    return train_acc, train_loss

2. Write the test function

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # number of test images
    num_batches = len(dataloader)   # number of batches
    test_loss, test_acc = 0, 0

    # No training happens here, so disable gradient tracking to save memory and compute
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()
            test_loss += loss.item()

    test_acc /= size
    test_loss /= num_batches
    return test_acc, test_loss
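Both functions divide the two accumulators by different totals: the count of correct predictions is divided by the dataset size, while the summed per-batch mean losses are divided by the number of batches. The sketch below walks through that arithmetic on made-up numbers. The three batches, their sizes, and their losses are hypothetical values chosen only for illustration.

```python
# Each tuple is (batch size, correct predictions, mean loss of the batch); hypothetical values
batches = [(32, 24, 0.70), (32, 20, 0.60), (6, 3, 0.50)]

size = sum(n for n, _, _ in batches)  # plays the role of len(dataloader.dataset)
num_batches = len(batches)            # plays the role of len(dataloader)

acc = sum(correct for _, correct, _ in batches) / size
loss = sum(batch_loss for _, _, batch_loss in batches) / num_batches

# For comparison: a loss average weighted by the number of samples in each batch
weighted_loss = sum(n * batch_loss for n, _, batch_loss in batches) / size

print(f"acc={acc:.4f} loss={loss:.4f} weighted_loss={weighted_loss:.4f}")
```

Averaging over batches gives the small final batch the same weight as a full one, so the reported loss can differ slightly from a per-sample average. For curves used to track training progress this is usually acceptable.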

3. Set up the dynamic learning rate

# Custom dynamic learning rate
def adjust_learning_rate(optimizer, epoch, start_lr):
    # Multiply the learning rate by 0.98 every 2 epochs
    lr = start_lr * (0.98 ** (epoch // 2))  # // is floor division
    for param_group in optimizer.param_groups:
        param_group['lr'] = lr

learn_rate = 1e-4
optimizer = torch.optim.SGD(model.parameters(), lr = learn_rate)

# Alternative: the official dynamic learning rate interface
lambda1 = lambda epoch: (0.92 ** (epoch // 20))
optimizer = torch.optim.SGD(model.parameters(), lr = learn_rate)
scheduler = torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda=lambda1)
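To see what the custom function does over a full run without training anything, the sketch below calls adjust_learning_rate on a stand-in object that only has a param_groups list, which is the one attribute the function touches. The fake_optimizer object is an assumption made for illustration; in the real loop the SGD optimizer is passed instead.

```python
from types import SimpleNamespace


def adjust_learning_rate(optimizer, epoch, start_lr):
    # Multiply the learning rate by 0.98 every 2 epochs
    lr = start_lr * (0.98 ** (epoch // 2))
    for param_group in optimizer.param_groups:
        param_group['lr'] = lr


# Hypothetical stand-in exposing only the attribute the function needs
fake_optimizer = SimpleNamespace(param_groups=[{'lr': 1e-4}])

history = []
for epoch in range(40):
    adjust_learning_rate(fake_optimizer, epoch, 1e-4)
    history.append(fake_optimizer.param_groups[0]['lr'])

print(f"{history[0]:.2E} {history[2]:.2E} {history[39]:.2E}")
```

Over 40 epochs this schedule applies 19 decay steps, so the final learning rate is about 68% of the starting value. That is a much gentler and more frequent decay than the single 0.92 step the LambdaLR rule would produce over the same run.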

4. Run the training

loss_fn = nn.CrossEntropyLoss()  # create the loss function
epochs = 40

train_loss = []
train_acc = []
test_loss = []
test_acc = []

for epoch in range(epochs):
    # Update the learning rate with the custom function
    adjust_learning_rate(optimizer, epoch, learn_rate)

    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, optimizer)
    # scheduler.step()  # update the learning rate (use this instead when relying on the official interface)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Read the current learning rate from the optimizer
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    template = ('Epoch: {:2d}. Train_acc: {:.1f}%, Train_loss: {:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}, Lr: {:.2E}')
    print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss, epoch_test_acc*100, epoch_test_loss, lr))

print('Done')

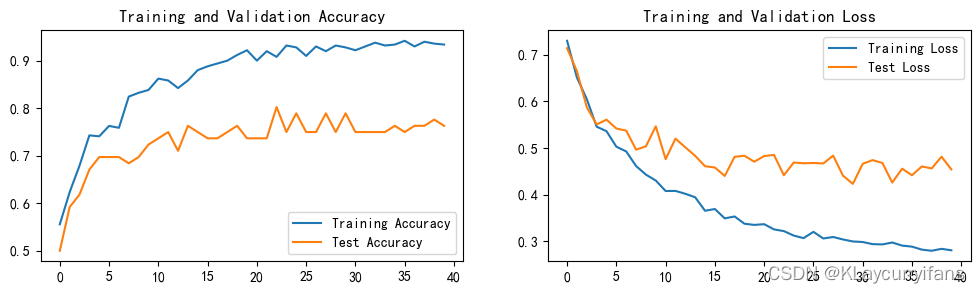

IV. Visualize the results

1. Loss and accuracy curves

import matplotlib.pyplot as plt
import warnings

warnings.filterwarnings('ignore')  # hide warnings
plt.rcParams['font.sans-serif'] = ['SimHei']  # lets matplotlib render Chinese labels
plt.rcParams['axes.unicode_minus'] = False    # lets matplotlib render the minus sign
plt.rcParams['figure.dpi'] = 100              # figure resolution

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)
plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Test Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Test Loss')

plt.show()

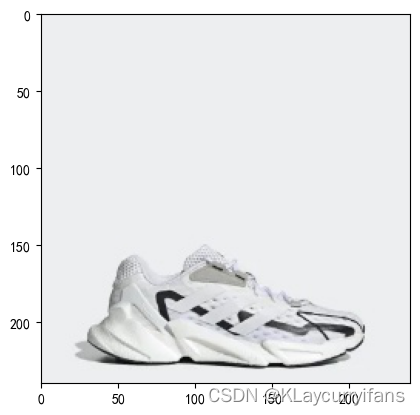

2. Predict a specified image

from PIL import Image

classes = list(train_dataset.class_to_idx)

def predict_one_image(image_path, model, transform, classes):
    test_img = Image.open(image_path).convert('RGB')
    plt.imshow(test_img)  # display the image

    test_img = transform(test_img)
    img = test_img.to(device).unsqueeze(0)  # add the batch dimension

    model.eval()
    with torch.no_grad():  # inference only, so no gradients are needed
        output = model(img)

    _, pred = torch.max(output, 1)
    pred_class = classes[pred.item()]  # convert the one-element tensor to a Python int
    print(f'Prediction: {pred_class}')

predict_one_image(image_path='C:/Users/Dell/Desktop/ML-K/P5/data/test/adidas/1.jpg',
                  model = model,
                  transform = test_transforms,
                  classes = classes)

Prediction: adidas
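The last step of the prediction, turning the model output into a class name, can be illustrated without a model. In the sketch below the two logits are hypothetical numbers rather than real model output, and the softmax is included only to show how the raw scores could be read as probabilities; the function above uses the argmax alone.

```python
import math

classes = ['adidas', 'nike']  # same order as train_dataset.class_to_idx
logits = [1.3, -0.4]          # hypothetical raw scores for one image

# Index of the largest score, the role played by torch.max(output, 1)
pred = max(range(len(logits)), key=lambda i: logits[i])

# Softmax turns the scores into probabilities that sum to 1
exps = [math.exp(v - max(logits)) for v in logits]
probs = [e / sum(exps) for e in exps]

print(classes[pred], [round(p, 3) for p in probs])
```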

V. Save and load the model

PATH = './model.pth'  # file name for the saved parameters
torch.save(model.state_dict(), PATH)

# Load the saved parameters back into the model
model.load_state_dict(torch.load(PATH, map_location=device))

<All keys matched successfully>
