
Life of a Packet in Kubernetes - Advanced Calico Networking (Annotated Edition)


As we discussed in Part 1, CNI plugins play an essential role in Kubernetes networking. Many third-party CNI plugins are available today, and Calico is one of them. Many engineers prefer Calico, mainly because of its ease of use and the way it shapes the network fabric.

Calico supports a broad range of platforms, including Kubernetes, OpenShift, Docker EE, OpenStack, and bare metal services. The Calico node runs in a Docker container on the Kubernetes master node and on each Kubernetes worker node in the cluster. The calico-cni plugin integrates directly with the Kubernetes kubelet process on each node to discover which Pods are created and add them to Calico networking.

We will talk about installation, Calico modules (Felix, BIRD, and Confd), and routing modes.

What is not covered? Network policy. It needs a separate article, so we skip it for now.

Topics — Part 2

- Requirements

- Modules and their functions

- Routing modes

- Installation (calico and calicoctl)

CNI Requirements

- Create a veth pair and move one end into the container

- Identify the right Pod CIDR

- Create a CNI configuration file

- Assign and manage IP addresses

- Add default routes inside the container

- Advertise the routes to all the peer nodes (not applicable to VXLAN)

- Add routes on the host

- Enforce Network Policy
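To make the requirements above concrete, here is a rough sketch in Python that only builds the list of iproute2 commands covering the first few items for a single Pod. It is an illustration under assumptions, not what Calico's CNI plugin actually executes (the plugin talks to the kernel through netlink). The namespace name `pod1`, the temporary peer name `tmp0` and the helper names are hypothetical; `cali123` and the 169.254.1.1 gateway come from this article. Route advertisement and network policy are left out because BIRD and Felix handle them.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PodNetSpec:
    """Inputs for wiring up one Pod (all values illustrative)."""
    netns: str                     # named network namespace of the Pod (hypothetical)
    host_ifname: str               # host-side veth name, e.g. "cali123"
    pod_ip: str                    # address returned by IPAM
    gateway: str = "169.254.1.1"   # link-local gateway used inside Calico Pods


def plan_pod_network(spec: PodNetSpec) -> list[str]:
    """Return iproute2 commands that roughly cover the CNI requirements."""
    ns = f"ip netns exec {spec.netns}"
    return [
        # Create the veth pair; "tmp0" is a hypothetical temporary peer name.
        f"ip link add {spec.host_ifname} type veth peer name tmp0",
        # Move the peer into the Pod namespace and rename it to eth0.
        f"ip link set tmp0 netns {spec.netns}",
        f"{ns} ip link set tmp0 name eth0",
        # Assign the IPAM address as a /32 and bring both ends up.
        f"{ns} ip addr add {spec.pod_ip}/32 dev eth0",
        f"{ns} ip link set eth0 up",
        f"ip link set {spec.host_ifname} up",
        # Default route inside the container via the link-local gateway.
        f"{ns} ip route add {spec.gateway} dev eth0 scope link",
        f"{ns} ip route add default via {spec.gateway} dev eth0",
        # Host side: answer ARP for the gateway and route the Pod IP to the veth.
        f"sysctl -w net.ipv4.conf.{spec.host_ifname}.proxy_arp=1",
        f"ip route add {spec.pod_ip}/32 dev {spec.host_ifname} scope link",
    ]


if __name__ == "__main__":
    for cmd in plan_pod_network(PodNetSpec("pod1", "cali123", "192.168.49.66")):
        print(cmd)
```

Returning commands instead of running them keeps the sketch safe to execute anywhere and makes the order of the steps easy to read.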

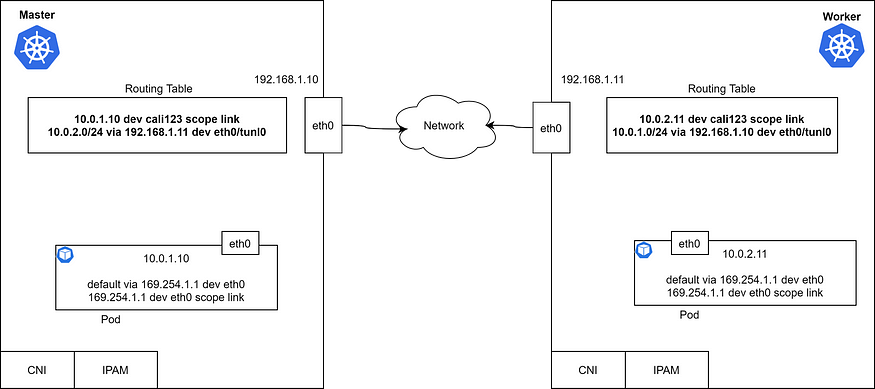

There are many other requirements too, but the ones above are the basic ones. Let's take a look at the routing tables on the master and worker nodes. Each node has a container with an IP address and a default container route.

Basic Kubernetes network requirement

Looking at the routing tables, it is evident that the Pods can talk to each other over the L3 network, since all the required routes are in place. Which module is responsible for adding these routes, and how does it learn about the remote routes? Also, why is there a default route with gateway 169.254.1.1? We will talk about that in a moment.

- Which module is responsible for adding these routes?

- How does it learn about the remote routes?

- Why is the Pod's default gateway 169.254.1.1?

These are the questions Calico has to answer.

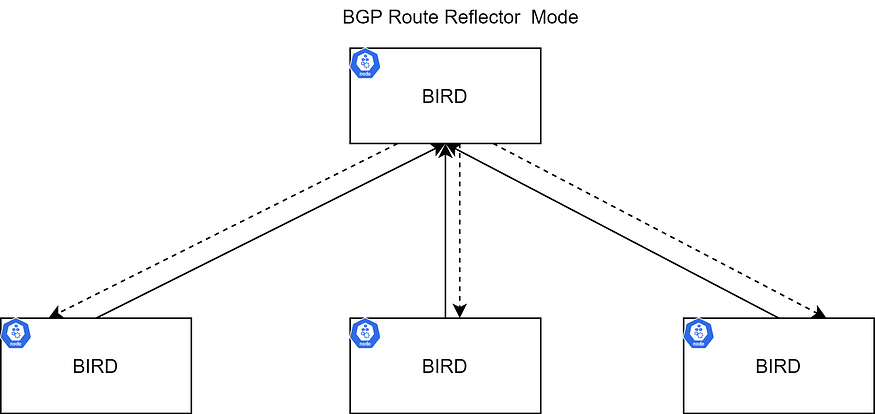

The core components of Calico are BIRD, Felix, ConfD, etcd, and the Kubernetes API server. The datastore holds the configuration information (IP pools, endpoint info, network policies, etc.). In our example, we use Kubernetes as the Calico datastore.

BIRD (BGP)

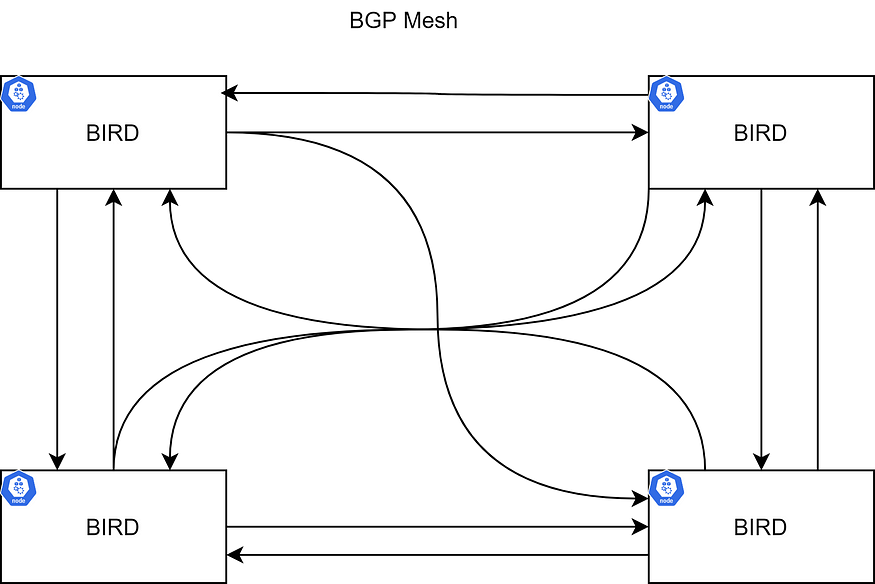

BIRD is a per-node BGP daemon that exchanges route information with the BGP daemons running on other nodes. The common topology is a node-to-node mesh, where each BGP speaker peers with every other one.

For large-scale deployments, this can get messy. Route reflectors reduce the number of BGP-to-BGP connections needed to complete route propagation (certain BGP nodes can be configured as route reflectors). Rather than each BGP speaker peering with every other BGP speaker within the AS, each one peers with a route reflector instead. Routing advertisements sent to the route reflector are then reflected to all of the other BGP speakers. For more information, see RFC 4456.
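To see why a full mesh gets messy, it helps to count BGP sessions. A short sketch, assuming dedicated route reflectors that are fully meshed with each other and that every node peers with every reflector (one common layout, not the only one). The function names are hypothetical.

```python
def full_mesh_sessions(nodes: int) -> int:
    """Every BGP speaker peers with every other one: n * (n - 1) / 2 sessions."""
    return nodes * (nodes - 1) // 2


def route_reflector_sessions(nodes: int, reflectors: int) -> int:
    """Each node peers with every reflector; the reflectors mesh among themselves.

    Assumes dedicated reflectors that are not counted in `nodes`.
    """
    return nodes * reflectors + full_mesh_sessions(reflectors)


for n in (10, 100, 1000):
    print(f"{n:>5} nodes: mesh={full_mesh_sessions(n):>7}  rr(2)={route_reflector_sessions(n, 2):>5}")
```

For 100 nodes this gives 4,950 sessions for the full mesh versus 201 with two reflectors: the mesh grows quadratically, the reflector design linearly.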

The BIRD instance is responsible for propagating routes to other BIRD instances. The default configuration is a full "BGP mesh", which is fine for small deployments. In large-scale deployments, it is recommended to use route reflectors to avoid these issues. You can run more than one route reflector for high availability. External, rack-level route reflectors (for example, top-of-rack routers) can also be used instead of BIRD.

ConfD

ConfD is a simple configuration management tool that runs in the Calico node container. It reads values (the BIRD configuration for Calico) from etcd and writes them to files on disk. It loops through the pools (networks and subnetworks) to apply configuration data (CIDR keys) and assembles them in a form BIRD can use. So whenever the network changes, BIRD can detect it and propagate the routes to the other nodes.
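The ConfD idea (read keys from a store, render a config file for BIRD) fits in a few lines. This is a toy sketch, not Calico's real template: the key names under `/example/blocks/` are made up, and the output is a BIRD 1.x-style `static` section used only for illustration. It renders the same block that later shows up on the master as `blackhole 192.168.49.64/26 proto bird`.

```python
from __future__ import annotations

import ipaddress

# Hypothetical key/value pairs; the key names are made up for this sketch.
datastore = {
    "/example/blocks/controlplane": "192.168.49.64/26",
    "/example/blocks/node01": "192.168.196.128/26",
}


def render_bird_static(store: dict[str, str], node: str) -> str:
    """Render a BIRD-style static section for the blocks owned by `node`."""
    lines = ["protocol static {"]
    for key, cidr in sorted(store.items()):
        if key.rsplit("/", 1)[-1] != node:
            continue  # each node originates only its own blocks
        net = ipaddress.ip_network(cidr)  # validates the CIDR, raises ValueError if bad
        lines.append(f"  route {net} blackhole;")
    lines.append("}")
    return "\n".join(lines)


def write_config(path: str, text: str) -> None:
    """Last step of the loop: write the rendered file so BIRD can reload it."""
    with open(path, "w") as f:
        f.write(text + "\n")


print(render_bird_static(datastore, "controlplane"))
```

Validating each value before rendering mirrors why a tool like ConfD exists: BIRD only ever sees a complete, well-formed file.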

Felix

The Calico Felix daemon runs in the Calico node container and brings the solution together by taking several actions:

- Reads information from the Kubernetes etcd

- Builds the routing table

- Configures iptables (kube-proxy in iptables mode)

- Configures IPVS (kube-proxy in IPVS mode)
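Felix's "builds the routing table" duty is essentially a reconcile loop: compare the routes the host should have with the routes it has, then add or delete the difference. A toy sketch of that idea for local workload routes only; the function names are hypothetical, and Felix itself is written in Go and programs routes through netlink with far more state than this.

```python
from __future__ import annotations


def desired_routes(endpoints: dict[str, str]) -> set[str]:
    """One host route per local workload endpoint, in `ip route` text form."""
    return {f"{ip} dev {iface} scope link" for ip, iface in endpoints.items()}


def reconcile(current: set[str], endpoints: dict[str, str]) -> tuple[set[str], set[str]]:
    """Return (to_add, to_delete) so that the host's workload routes match.

    Only routes on cali* devices are treated as managed, so unrelated host
    routes (ens3, docker0, ...) are never touched.
    """
    want = desired_routes(endpoints)
    managed = {r for r in current if " dev cali" in r}
    return want - current, managed - want


# Local endpoints on the master (IP -> host-side interface), taken from the
# routes shown later in this article.
endpoints = {
    "192.168.49.65": "calic22dbe57533",
    "192.168.49.66": "cali9861acf9f07",
}
current = {
    "172.17.0.0/16 dev ens3 proto kernel scope link src 172.17.0.32",
    "192.168.49.65 dev calic22dbe57533 scope link",
}
to_add, to_delete = reconcile(current, endpoints)
print(to_add)     # {'192.168.49.66 dev cali9861acf9f07 scope link'}
print(to_delete)  # set()
```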

Let’s look at the cluster with all Calico modules,

Deployment with ‘NoSchedule’ Toleration

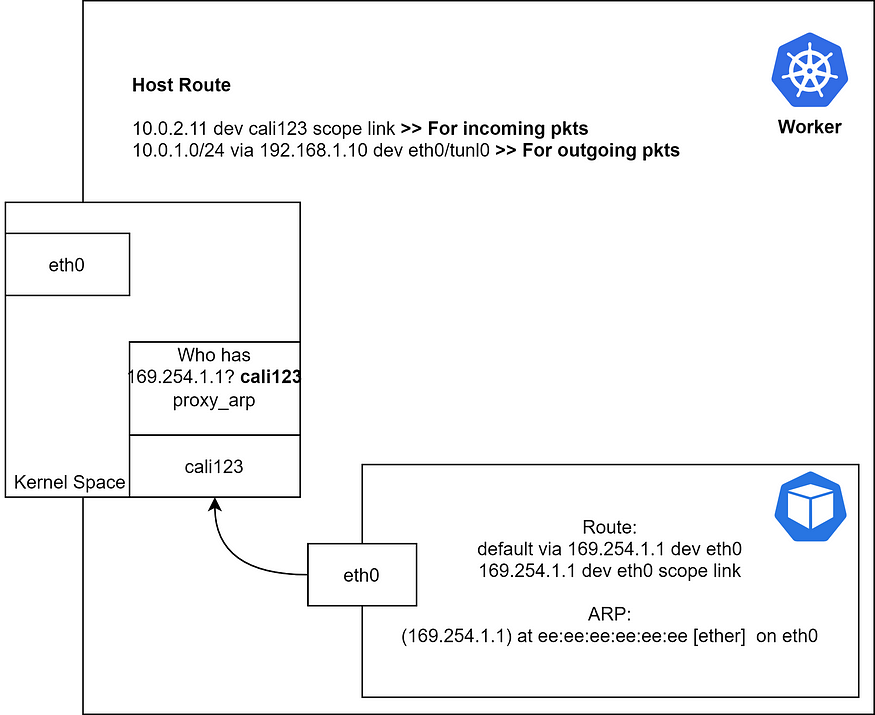

Notice anything different? Yes: one end of the veth is dangling, not connected to anything; it sits in kernel space.

How does the packet get routed to the peer node?

- The Pod on the master tries to ping the IP address 10.0.2.11.

- The Pod sends an ARP request to the gateway.

- It gets the ARP response with the MAC address.

- Wait, who sent the ARP response?

What's going on? How can a container route to an IP that doesn't exist?

Let’s walk through what’s happening. Some of you reading this might have noticed that 169.254.1.1 is an IPv4 link-local address.

The container has a default route pointing at a link-local address. The container expects this IP address to be reachable on its directly connected interface, in this case the container's eth0 interface. The container will ARP for that IP address when it wants to send traffic out through the default route.

If we capture the ARP response, it shows the MAC address of the other end of the veth (for cali123 this MAC is all e's: ee:ee:ee:ee:ee:ee). So you might be wondering how on earth the host replies to an ARP request for an IP address that none of its interfaces has.

The answer is proxy-arp. If we check the host side VETH interface, we’ll see that proxy-arp is enabled.

master $ cat /proc/sys/net/ipv4/conf/cali123/proxy_arp
1

“Proxy ARP is a technique by which a proxy device on a given network answers the ARP queries for an IP address that is not on that network. The proxy is aware of the location of the traffic’s destination, and offers its own MAC address as the (ostensibly final) destination.[1] The traffic directed to the proxy address is then typically routed by the proxy to the intended destination via another interface or via a tunnel. The process, which results in the node responding with its own MAC address to an ARP request for a different IP address for proxying purposes, is sometimes referred to as publishing”
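Proxy ARP as used here can be captured in a toy model (simplified, not kernel code): the host answers an ARP request arriving on a `cali*` interface with that interface's own MAC if proxy ARP is enabled and the host has a route to the requested IP. For 169.254.1.1 the host's default route is enough, which is why the link-local gateway works even though no interface owns that address. Class and method names are hypothetical.

```python
from __future__ import annotations

import ipaddress
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class HostVeth:
    """Host side of a Pod's veth pair (toy model)."""
    name: str
    mac: str = "ee:ee:ee:ee:ee:ee"   # MAC observed on the cali* interfaces in this article
    proxy_arp: bool = True           # mirrors /proc/sys/net/ipv4/conf/<name>/proxy_arp


@dataclass
class Host:
    routes: list = field(default_factory=list)   # ipaddress.IPv4Network entries

    def has_route(self, ip: str) -> bool:
        addr = ipaddress.ip_address(ip)
        return any(addr in net for net in self.routes)

    def answer_arp(self, iface: HostVeth, target_ip: str) -> Optional[str]:
        """Reply with the interface's own MAC when proxy ARP applies.

        Simplified: the real kernel check also considers, among other things,
        which interface the route points to; that is ignored here.
        """
        if iface.proxy_arp and self.has_route(target_ip):
            return iface.mac
        return None


host = Host(routes=[ipaddress.ip_network("0.0.0.0/0")])   # default route via ens3
print(host.answer_arp(HostVeth("cali123"), "169.254.1.1"))                    # ee:ee:ee:ee:ee:ee
print(host.answer_arp(HostVeth("cali123", proxy_arp=False), "169.254.1.1"))   # None
```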

Let's take a closer look at the worker node.

Once the packet reaches the kernel, it routes the packet based on routing table entries.
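The kernel's basic rule for choosing an entry is longest-prefix match. A small sketch of that rule using node01's routing table from the IP-in-IP demo later in this article; it ignores metrics, multiple routing tables and policy routing, and the `lookup` helper is hypothetical.

```python
from __future__ import annotations

import ipaddress
from typing import Optional

# Routes from node01's `ip route` output in the IP-in-IP demo of this article.
# Each entry is (prefix, device); the blackhole route is modelled as device None.
NODE01_ROUTES = [
    ("0.0.0.0/0", "ens3"),
    ("172.17.0.0/16", "ens3"),
    ("172.18.0.0/24", "docker0"),
    ("192.168.49.64/26", "tunl0"),              # master's block, via 172.17.0.32
    ("192.168.196.128/26", None),               # blackhole for node01's own block
    ("192.168.196.129/32", "calid4f00d97cb5"),
    ("192.168.196.130/32", "cali257578b48b6"),
    ("192.168.196.131/32", "calib673e730d42"),
]


def lookup(dst: str, routes=NODE01_ROUTES) -> tuple[str, Optional[str]]:
    """Pick the most specific matching route (longest-prefix match)."""
    addr = ipaddress.ip_address(dst)
    matches = [
        (ipaddress.ip_network(prefix), dev)
        for prefix, dev in routes
        if addr in ipaddress.ip_network(prefix)
    ]
    net, dev = max(matches, key=lambda m: m[0].prefixlen)
    return str(net), dev


print(lookup("192.168.196.131"))  # ('192.168.196.131/32', 'calib673e730d42')
print(lookup("192.168.196.140"))  # ('192.168.196.128/26', None), i.e. dropped
print(lookup("192.168.49.66"))    # ('192.168.49.64/26', 'tunl0')
```

An incoming packet for 192.168.196.131 therefore matches the /32 host route and is written into calib673e730d42, while an unused address in the node's own block hits the blackhole route instead of leaking out the default route.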

Incoming traffic

- The packet reaches the worker node's kernel.

- The kernel puts the packet into cali123.

Routing Modes

Calico supports 3 routing modes; in this section, we will see the pros and cons of each method and where we can use them.

- IP-in-IP: default; encapsulated

- Direct/NoEncapMode: unencapsulated (Preferred)

- VXLAN: encapsulated (No BGP)

IP-in-IP (Default)

IP-in-IP is a simple form of encapsulation achieved by putting an IP packet inside another. A transmitted packet contains an outer header with host source and destination IPs and an inner header with pod source and destination IPs.

Inner header: source Pod IP -> destination Pod IP

Outer header: IP of the node hosting the source Pod -> IP of the node hosting the destination Pod
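The two headers can be built byte by byte. A minimal sketch using the demo's addresses (master 172.17.0.32, node01 172.17.0.40, Pods 192.168.49.66 and 192.168.196.131). The payload is placeholder bytes rather than a real ICMP message, and TTL 64, ID 0 and no DF flag are simplifications; the kernel fills these fields its own way. The helper names are hypothetical. The key detail is the outer header's protocol field: 4, meaning "an IPv4 packet follows".

```python
import socket
import struct

IPPROTO_ICMP = 1
IPPROTO_IPIP = 4   # protocol number carried by the outer header in IP-in-IP


def ipv4_checksum(header: bytes) -> int:
    """One's-complement sum of 16-bit words, as used by the IPv4 header."""
    total = 0
    for i in range(0, len(header), 2):
        total += (header[i] << 8) | header[i + 1]
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def ipv4_header(src: str, dst: str, proto: int, payload_len: int, ttl: int = 64) -> bytes:
    """Minimal 20-byte IPv4 header without options."""
    hdr = struct.pack(
        "!BBHHHBBH4s4s",
        (4 << 4) | 5,          # version 4, IHL 5 (20 bytes, no options)
        0,                     # DSCP/ECN
        20 + payload_len,      # total length
        0, 0,                  # identification, flags/fragment offset
        ttl, proto,
        0,                     # checksum placeholder
        socket.inet_aton(src), socket.inet_aton(dst),
    )
    return hdr[:10] + struct.pack("!H", ipv4_checksum(hdr)) + hdr[12:]


payload = b"\x00" * 64   # placeholder bytes standing in for an ICMP echo request
inner = ipv4_header("192.168.49.66", "192.168.196.131", IPPROTO_ICMP, len(payload)) + payload
outer = ipv4_header("172.17.0.32", "172.17.0.40", IPPROTO_IPIP, len(inner))
packet = outer + inner

print(len(packet), packet[9], socket.inet_ntoa(packet[16:20]), socket.inet_ntoa(packet[36:40]))
```

The receiving node strips the first 20 bytes and routes what remains exactly as if the Pod had sent it directly.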

Azure doesn't support IP-IP (as far as I know), so we can't use IP-IP in that environment. It's better to disable IP-IP to get better performance.

NoEncapMode

In this mode, packets are sent as if they came directly from the Pod. Since there is no encapsulation or decapsulation overhead, direct mode is highly performant.

The source/destination check must be disabled on AWS instances to use this mode.
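The overhead difference between the modes is easy to quantify. A back-of-the-envelope sketch, assuming an IPv4 underlay with a 1500-byte MTU and no IP options: IP-in-IP adds one 20-byte IPv4 header, while VXLAN adds an outer IPv4 header (20), a UDP header (8), the VXLAN header (8) and the inner Ethernet header (14). The 1440 MTU seen in the demo below is simply the value the manifest configures; this sketch does not claim to derive it.

```python
# Extra bytes each mode adds per packet (IPv4 underlay, no IP options).
OVERHEAD = {
    "noencap": 0,                # packet leaves the node unchanged
    "ipip": 20,                  # one outer IPv4 header
    "vxlan": 20 + 8 + 8 + 14,    # outer IPv4 + UDP + VXLAN + inner Ethernet
}


def max_pod_mtu(underlay_mtu: int, mode: str) -> int:
    """Largest Pod MTU that fits in one underlay packet without fragmentation."""
    return underlay_mtu - OVERHEAD[mode]


for mode in OVERHEAD:
    print(mode, max_pod_mtu(1500, mode))
# noencap 1500
# ipip 1480
# vxlan 1450
```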

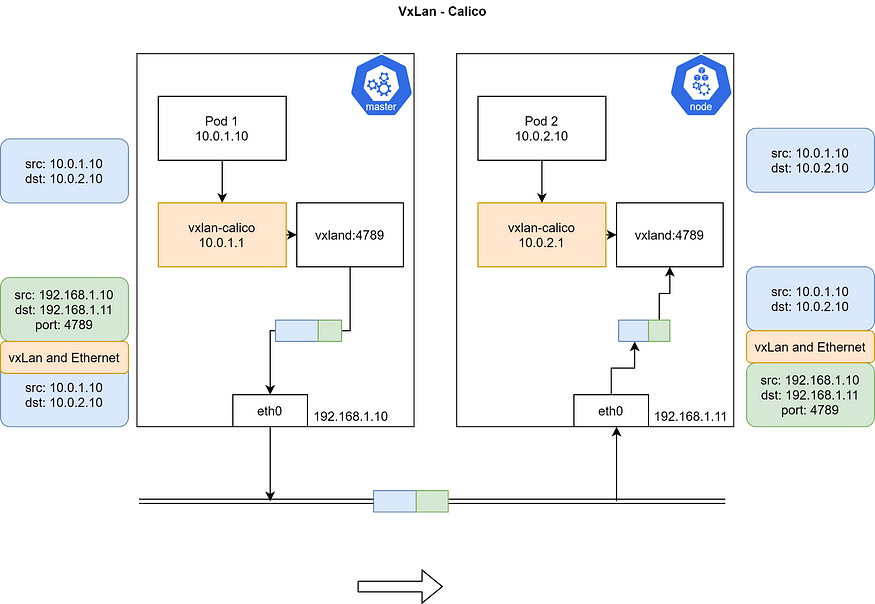

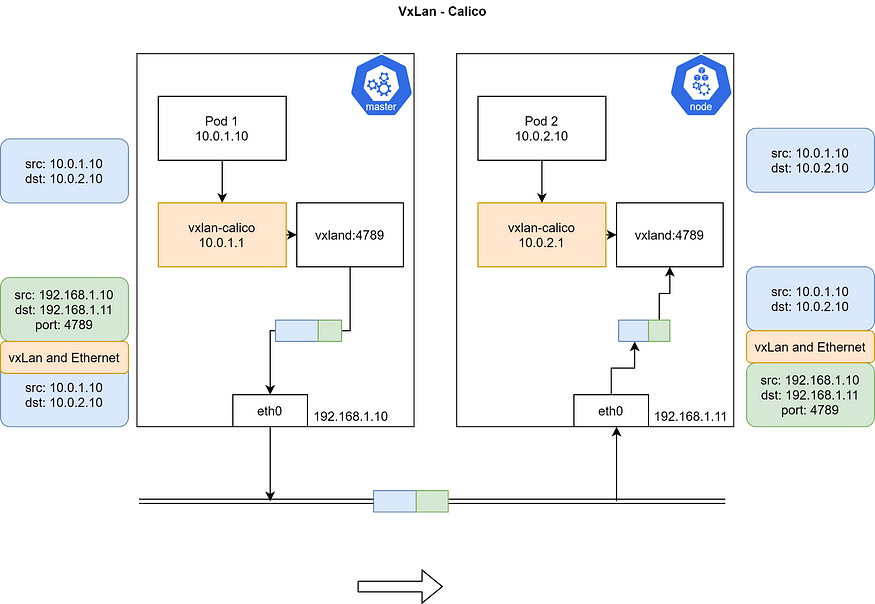

VXLAN

VXLAN routing is supported in Calico 3.7+.

VXLAN stands for Virtual Extensible LAN. VXLAN is an encapsulation technique in which layer-2 Ethernet frames are encapsulated in UDP packets (similar to flannel). VXLAN is a network virtualization technology. When devices communicate within a software-defined data center, a VXLAN tunnel is set up between those devices. Those tunnels can be set up on both physical and virtual switches. The switch ports are known as VXLAN Tunnel Endpoints (VTEPs) and are responsible for encapsulating and decapsulating VXLAN packets. Devices without VXLAN support are connected to a switch with VTEP functionality, and the switch converts traffic to and from VXLAN.
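The VXLAN header itself is only 8 bytes (RFC 7348): a flags byte whose "I" bit (0x08) marks the VNI as valid, 3 reserved bytes, a 24-bit VXLAN Network Identifier (VNI) and 1 more reserved byte. A minimal sketch that builds and parses it; the surrounding UDP and IP headers are omitted, the helper names are hypothetical, and the VNI value 4096 is, to my knowledge, Calico's default, so treat it as an assumption.

```python
import struct

VXLAN_PORT = 4789          # IANA-assigned UDP port for VXLAN
FLAG_VNI_VALID = 0x08      # the "I" flag: VNI field is valid


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header.

    Layout: flags (1 byte), reserved (3 bytes), VNI (3 bytes), reserved (1 byte).
    """
    if not 0 <= vni < 1 << 24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!B3xI", FLAG_VNI_VALID, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from a VXLAN header, checking the I flag."""
    flags, word = struct.unpack("!B3xI", header[:8])
    if not flags & FLAG_VNI_VALID:
        raise ValueError("I flag not set; VNI is not valid")
    return word >> 8


hdr = vxlan_header(4096)   # 4096 assumed to be Calico's default VNI
print(hdr.hex())           # 0800000000100000
print(parse_vni(hdr))      # 4096
```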

VXLAN is great for networks that do not support IP-in-IP, such as Azure or any other DC that doesn’t support BGP.

Demo: IP-in-IP and NoEncapMode

Check the cluster state before the Calico installation.

master $ kubectl get nodes
NAME           STATUS     ROLES    AGE   VERSION
controlplane   NotReady   master   40s   v1.18.0
node01         NotReady   <none>   9s    v1.18.0
master $ kubectl get pods --all-namespaces
NAMESPACE     NAME                                   READY   STATUS    RESTARTS   AGE
kube-system   coredns-66bff467f8-52tkd               0/1     Pending   0          32s
kube-system   coredns-66bff467f8-g5gjb               0/1     Pending   0          32s
kube-system   etcd-controlplane                      1/1     Running   0          34s
kube-system   kube-apiserver-controlplane            1/1     Running   0          34s
kube-system   kube-controller-manager-controlplane   1/1     Running   0          34s
kube-system   kube-proxy-b2j4x                       1/1     Running   0          13s
kube-system   kube-proxy-s46lv                       1/1     Running   0          32s
kube-system   kube-scheduler-controlplane            1/1     Running   0          33s

Check the CNI bin and conf directories. There won't be any configuration file or Calico binary yet, because the Calico installation populates them via a volume mount.

master $ cd /etc/cni
-bash: cd: /etc/cni: No such file or directory
master $ cd /opt/cni/bin
master $ ls
bridge  dhcp  flannel  host-device  host-local  ipvlan  loopback  macvlan  portmap  ptp  sample  tuning  vlan

Check the IP routes in the master/worker node.

master $ ip route
default via 172.17.0.1 dev ens3
172.17.0.0/16 dev ens3 proto kernel scope link src 172.17.0.32
172.18.0.0/24 dev docker0 proto kernel scope link src 172.18.0.1 linkdown

Download and apply the calico.yaml based on your environment.

curl https://docs.projectcalico.org/manifests/calico.yaml -O
kubectl apply -f calico.yaml

Let’s take a look at some useful configuration parameters,

cni_network_config: |-
  {
    "name": "k8s-pod-network",
    "cniVersion": "0.3.1",
    "plugins": [
      {
        "type": "calico",   >>> Calico's CNI plugin
        "log_level": "info",
        "log_file_path": "/var/log/calico/cni/cni.log",
        "datastore_type": "kubernetes",
        "nodename": "__KUBERNETES_NODE_NAME__",
        "mtu": __CNI_MTU__,
        "ipam": {
          "type": "calico-ipam"   >>> Calico's IPAM instead of the default IPAM
        },
        "policy": {
          "type": "k8s"
        },
        "kubernetes": {
          "kubeconfig": "__KUBECONFIG_FILEPATH__"
        }
      },
      {
        "type": "portmap",
        "snat": true,
        "capabilities": {"portMappings": true}
      },
      {
        "type": "bandwidth",
        "capabilities": {"bandwidth": true}
      }
    ]
  }

# Enable IPIP
- name: CALICO_IPV4POOL_IPIP
  value: "Always"   >> Set this to 'Never' to disable IP-IP
# Enable or Disable VXLAN on the default IP pool.
- name: CALICO_IPV4POOL_VXLAN
  value: "Never"

Check the Pod and node status after the Calico installation.

master $ kubectl get pods --all-namespaces
NAMESPACE     NAME                                       READY   STATUS              RESTARTS   AGE
kube-system   calico-kube-controllers-799fb94867-6qj77   0/1     ContainerCreating   0          21s
kube-system   calico-node-bzttq                          0/1     PodInitializing     0          21s
kube-system   calico-node-r6bwj                          0/1     PodInitializing     0          21s
kube-system   coredns-66bff467f8-52tkd                   0/1     Pending             0          7m5s
kube-system   coredns-66bff467f8-g5gjb                   0/1     ContainerCreating   0          7m5s
kube-system   etcd-controlplane                          1/1     Running             0          7m7s
kube-system   kube-apiserver-controlplane                1/1     Running             0          7m7s
kube-system   kube-controller-manager-controlplane       1/1     Running             0          7m7s
kube-system   kube-proxy-b2j4x                           1/1     Running             0          6m46s
kube-system   kube-proxy-s46lv                           1/1     Running             0          7m5s
kube-system   kube-scheduler-controlplane                1/1     Running             0          7m6s
master $ kubectl get nodes
NAME           STATUS   ROLES    AGE     VERSION
controlplane   Ready    master   7m30s   v1.18.0
node01         Ready    <none>   6m59s   v1.18.0

Explore the CNI configuration, since that is what the kubelet needs to set up the network.

master $ cd /etc/cni/net.d/
master $ ls
10-calico.conflist  calico-kubeconfig
master $ cat 10-calico.conflist
{
  "name": "k8s-pod-network",
  "cniVersion": "0.3.1",
  "plugins": [
    {
      "type": "calico",
      "log_level": "info",
      "log_file_path": "/var/log/calico/cni/cni.log",
      "datastore_type": "kubernetes",
      "nodename": "controlplane",
      "mtu": 1440,
      "ipam": {
        "type": "calico-ipam"
      },
      "policy": {
        "type": "k8s"
      },
      "kubernetes": {
        "kubeconfig": "/etc/cni/net.d/calico-kubeconfig"
      }
    },
    {
      "type": "portmap",
      "snat": true,
      "capabilities": {"portMappings": true}
    },
    {
      "type": "bandwidth",
      "capabilities": {"bandwidth": true}
    }
  ]
}
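Per the CNI specification, the runtime invokes the plugins of a `.conflist` in list order for ADD (and in reverse order for DEL), so the Calico plugin sets up the interface and IP before `portmap` and `bandwidth` run. A small sketch for inspecting that chain; the helper names are hypothetical, and on a real node you would read `/etc/cni/net.d/10-calico.conflist` instead of the trimmed inline copy.

```python
from __future__ import annotations

import json
from typing import Optional

# Trimmed copy of /etc/cni/net.d/10-calico.conflist from the output above.
CONFLIST = """
{
  "name": "k8s-pod-network",
  "cniVersion": "0.3.1",
  "plugins": [
    {"type": "calico", "mtu": 1440, "ipam": {"type": "calico-ipam"}},
    {"type": "portmap", "snat": true, "capabilities": {"portMappings": true}},
    {"type": "bandwidth", "capabilities": {"bandwidth": true}}
  ]
}
"""


def plugin_chain(text: str) -> list[str]:
    """Plugin types in list order, which is the order used for ADD."""
    return [p["type"] for p in json.loads(text)["plugins"]]


def ipam_type(text: str) -> Optional[str]:
    """IPAM plugin type of the first plugin that declares one, if any."""
    plugins = json.loads(text)["plugins"]
    return next((p["ipam"]["type"] for p in plugins if "ipam" in p), None)


print(plugin_chain(CONFLIST))  # ['calico', 'portmap', 'bandwidth']
print(ipam_type(CONFLIST))     # calico-ipam
```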

Check the CNI binary files,

master $ ls
bandwidth  bridge  calico  calico-ipam  dhcp  flannel  host-device  host-local  install  ipvlan  loopback  macvlan  portmap  ptp  sample  tuning  vlan

Let's install calicoctl, which gives useful information about Calico and lets us modify the Calico configuration.

master $ cd /usr/local/bin/
master $ curl -O -L https://github.com/projectcalico/calicoctl/releases/download/v3.16.3/calicoctl
master $ chmod +x calicoctl
master $ export DATASTORE_TYPE=kubernetes
master $ export KUBECONFIG=~/.kube/config
# Check endpoints - it will be empty as we haven't deployed any Pod
master $ calicoctl get workloadendpoints
WORKLOAD   NODE   NETWORKS   INTERFACE

Check BGP peer status. This will show the ‘worker’ node as a peer.

master $ calicoctl node status
Calico process is running.

IPv4 BGP status
+--------------+-------------------+-------+----------+-------------+
| PEER ADDRESS |     PEER TYPE     | STATE |  SINCE   |    INFO     |
+--------------+-------------------+-------+----------+-------------+
| 172.17.0.40  | node-to-node mesh | up    | 00:24:04 | Established |
+--------------+-------------------+-------+----------+-------------+

Create a busybox Deployment with two replicas and a master-node toleration.

cat > busybox.yaml <<"EOF"
apiVersion: apps/v1
kind: Deployment
metadata:
  name: busybox-deployment
spec:
  selector:
    matchLabels:
      app: busybox
  replicas: 2
  template:
    metadata:
      labels:
        app: busybox
    spec:
      tolerations:
      - key: "node-role.kubernetes.io/master"
        operator: "Exists"
        effect: "NoSchedule"
      containers:
      - name: busybox
        image: busybox
        command: ["sleep"]
        args: ["10000"]
EOF
master $ kubectl apply -f busybox.yaml
deployment.apps/busybox-deployment created

Get Pod and endpoint status,

master $ kubectl get pods -o wide
NAME                                 READY   STATUS    RESTARTS   AGE   IP                NODE           NOMINATED NODE   READINESS GATES
busybox-deployment-8c7dc8548-btnkv   1/1     Running   0          6s    192.168.196.131   node01         <none>           <none>
busybox-deployment-8c7dc8548-x6ljh   1/1     Running   0          6s    192.168.49.66     controlplane   <none>           <none>
master $ calicoctl get workloadendpoints
WORKLOAD                             NODE           NETWORKS             INTERFACE
busybox-deployment-8c7dc8548-btnkv   node01         192.168.196.131/32   calib673e730d42
busybox-deployment-8c7dc8548-x6ljh   controlplane   192.168.49.66/32     cali9861acf9f07

Get the details of the host-side veth peer of the busybox Pod on the master node.

master $ ifconfig cali9861acf9f07

cali9861acf9f07: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1440

inet6 fe80::ecee:eeff:feee:eeee prefixlen 64 scopeid 0x20<link>

ether ee:ee:ee:ee:ee:ee txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 5 bytes 446 (446.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

Get the details of the master Pod’s interface,

master $ kubectl exec busybox-deployment-8c7dc8548-x6ljh -- ifconfig

eth0 Link encap:Ethernet HWaddr 92:7E:C4:15:B9:82

inet addr:192.168.49.66 Bcast:192.168.49.66 Mask:255.255.255.255

UP BROADCAST RUNNING MULTICAST MTU:1440 Metric:1

RX packets:5 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:446 (446.0 B) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

master $ kubectl exec busybox-deployment-8c7dc8548-x6ljh -- ip route

default via 169.254.1.1 dev eth0

169.254.1.1 dev eth0 scope link

master $ kubectl exec busybox-deployment-8c7dc8548-x6ljh -- arp

master $

Get the master node routes,

master $ ip route
default via 172.17.0.1 dev ens3
172.17.0.0/16 dev ens3 proto kernel scope link src 172.17.0.32
172.18.0.0/24 dev docker0 proto kernel scope link src 172.18.0.1 linkdown
blackhole 192.168.49.64/26 proto bird
192.168.49.65 dev calic22dbe57533 scope link
192.168.49.66 dev cali9861acf9f07 scope link
192.168.196.128/26 via 172.17.0.40 dev tunl0 proto bird onlink
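The `blackhole 192.168.49.64/26` entry is the master's own IPAM block: Calico hands each node blocks of Pod addresses, the node installs a blackhole route for its block, and BIRD advertises the block so peers route the whole range to that node (node01's block 192.168.196.128/26 goes via tunl0). A short sketch of that mapping, assuming the default 192.168.0.0/16 pool and /26 block size, which match the addresses here; the block-to-node table is read off these routes and the helper names are hypothetical.

```python
from __future__ import annotations

import ipaddress

POOL = ipaddress.ip_network("192.168.0.0/16")   # assumed default IPv4 pool
BLOCK_PREFIX = 26                                # block size seen in the routes above

# Block-to-node affinity as implied by the routes in this demo.
BLOCK_OWNER = {
    ipaddress.ip_network("192.168.49.64/26"): "controlplane",
    ipaddress.ip_network("192.168.196.128/26"): "node01",
}


def block_of(pod_ip: str) -> ipaddress.IPv4Network:
    """Return the /26 block that contains a Pod IP."""
    addr = ipaddress.ip_address(pod_ip)
    if addr not in POOL:
        raise ValueError(f"{pod_ip} is outside the pool {POOL}")
    return ipaddress.ip_network(f"{addr}/{BLOCK_PREFIX}", strict=False)


def node_for(pod_ip: str) -> str:
    """Which node a packet for this Pod IP should be routed to."""
    return BLOCK_OWNER[block_of(pod_ip)]


print(block_of("192.168.49.66"), node_for("192.168.49.66"))      # 192.168.49.64/26 controlplane
print(block_of("192.168.196.131"), node_for("192.168.196.131"))  # 192.168.196.128/26 node01
print(POOL.num_addresses // 2 ** (32 - BLOCK_PREFIX), "blocks")  # 1024 blocks
```

Advertising one /26 per block instead of one /32 per Pod keeps the BGP tables small.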

Let’s try to ping the worker node Pod to trigger ARP.

master $ kubectl exec busybox-deployment-8c7dc8548-x6ljh -- ping 192.168.196.131 -c 1
PING 192.168.196.131 (192.168.196.131): 56 data bytes
64 bytes from 192.168.196.131: seq=0 ttl=62 time=0.823 ms
master $ kubectl exec busybox-deployment-8c7dc8548-x6ljh -- arp
? (169.254.1.1) at ee:ee:ee:ee:ee:ee [ether]  on eth0

The MAC address of the gateway is simply the MAC address of cali9861acf9f07. From now on, whenever traffic leaves the Pod, it goes directly to the host kernel, and the kernel knows from the routing table that it has to send the packet out through tunl0.

Proxy ARP configuration,

master $ cat /proc/sys/net/ipv4/conf/cali9861acf9f07/proxy_arp
1

How does the destination node handle the packet?

node01 $ ip route
default via 172.17.0.1 dev ens3
172.17.0.0/16 dev ens3 proto kernel scope link src 172.17.0.40
172.18.0.0/24 dev docker0 proto kernel scope link src 172.18.0.1 linkdown
192.168.49.64/26 via 172.17.0.32 dev tunl0 proto bird onlink
blackhole 192.168.196.128/26 proto bird
192.168.196.129 dev calid4f00d97cb5 scope link
192.168.196.130 dev cali257578b48b6 scope link
192.168.196.131 dev calib673e730d42 scope link

Upon receiving the packet, the kernel sends it to the right veth based on the routing table.

We can see the IP-IP protocol on the wire if we capture the packets. Since IP-IP adds encapsulation overhead (and is not available everywhere, for example on Azure), let's disable it and see the effect.

Disable IP-IP

Update the ipPool configuration to disable IPIP.

master $ calicoctl get ippool default-ipv4-ippool -o yaml > ippool.yaml
master $ vi ippool.yaml

Open ippool.yaml, set ipipMode to 'Never', and apply the YAML with calicoctl.

master $ calicoctl apply -f ippool.yaml
Successfully applied 1 'IPPool' resource(s)
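The manual `vi` edit can also be scripted. A minimal sketch, assuming the Calico v3 IPPool schema where `spec.ipipMode` takes `Always`, `CrossSubnet` or `Never`, and assuming the pool is exported as JSON (`calicoctl get ippool default-ipv4-ippool -o json > ippool.json`) so that only the Python standard library is needed. The helper names and the sample pool values, apart from the pool name, are hypothetical.

```python
from __future__ import annotations

import copy
import json


def disable_ipip(ippool: dict) -> dict:
    """Return a copy of an IPPool resource with IP-in-IP turned off."""
    updated = copy.deepcopy(ippool)
    updated.setdefault("spec", {})["ipipMode"] = "Never"
    return updated


def rewrite_file(path: str) -> None:
    """Load an exported IPPool JSON file, disable IPIP, and write it back."""
    with open(path) as f:
        pool = json.load(f)
    with open(path, "w") as f:
        json.dump(disable_ipip(pool), f, indent=2)


sample = {
    "apiVersion": "projectcalico.org/v3",
    "kind": "IPPool",
    "metadata": {"name": "default-ipv4-ippool"},
    "spec": {"cidr": "192.168.0.0/16", "ipipMode": "Always", "natOutgoing": True},
}
print(disable_ipip(sample)["spec"]["ipipMode"])  # Never
```

After `rewrite_file("ippool.json")`, the result can be applied with `calicoctl apply -f ippool.json`; as far as I know calicoctl accepts JSON as well as YAML input. Copying instead of mutating keeps the original resource available for comparison or rollback.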

Recheck the IP route,

master $ ip route
default via 172.17.0.1 dev ens3
172.17.0.0/16 dev ens3 proto kernel scope link src 172.17.0.32
172.18.0.0/24 dev docker0 proto kernel scope link src 172.18.0.1 linkdown
blackhole 192.168.49.64/26 proto bird
192.168.49.65 dev calic22dbe57533 scope link
192.168.49.66 dev cali9861acf9f07 scope link
192.168.196.128/26 via 172.17.0.40 dev ens3 proto bird

The device is no longer tunl0; it is now the master node's management interface (ens3).

Let's ping the worker node Pod and make sure everything works. From now on, no IP-in-IP encapsulation is involved.

master $ kubectl exec busybox-deployment-8c7dc8548-x6ljh -- ping 192.168.196.131 -c 1
PING 192.168.196.131 (192.168.196.131): 56 data bytes
64 bytes from 192.168.196.131: seq=0 ttl=62 time=0.653 ms

--- 192.168.196.131 ping statistics ---
1 packets transmitted, 1 packets received, 0% packet loss
round-trip min/avg/max = 0.653/0.653/0.653 ms

Note: Source IP check should be disabled in AWS environment to use this mode.

Demo — VXLAN

Re-initiate the cluster and download the calico.yaml file to apply the following changes,

1. Remove bird from the livenessProbe and readinessProbe

livenessProbe:
  exec:
    command:
    - /bin/calico-node
    - -felix-live
    - -bird-live      >> Remove this
  periodSeconds: 10
  initialDelaySeconds: 10
  failureThreshold: 6
readinessProbe:
  exec:
    command:
    - /bin/calico-node
    - -felix-ready
    - -bird-ready     >> Remove this

2. Change the calico_backend to ‘vxlan’ as we don’t need BGP anymore.

kind: ConfigMap
apiVersion: v1
metadata:
  name: calico-config
  namespace: kube-system
data:
  # Typha is disabled.
  typha_service_name: "none"
  # Configure the backend to use.
  calico_backend: "vxlan"

3. Disable IPIP and enable VXLAN on the default IP pool

# Enable IPIP
- name: CALICO_IPV4POOL_IPIP
  value: "Never"    >> Set this to 'Never' to disable IP-IP
# Enable or Disable VXLAN on the default IP pool.
- name: CALICO_IPV4POOL_VXLAN
  value: "Always"   >> Set this to 'Always' to enable VXLAN

Let’s apply this new yaml.

master $ ip route

default via 172.17.0.1 dev ens3

172.17.0.0/16 dev ens3 proto kernel scope link src 172.17.0.15

172.18.0.0/24 dev docker0 proto kernel scope link src 172.18.0.1 linkdown

192.168.49.65 dev calif5cc38277c7 scope link

192.168.49.66 dev cali840c047460a scope link

192.168.196.128/26 via 192.168.196.128 dev vxlan.calico onlink

vxlan.calico: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1440

inet 192.168.196.128 netmask 255.255.255.255 broadcast 192.168.196.128

inet6 fe80::64aa:99ff:fe2f:dc24 prefixlen 64 scopeid 0x20<link>

ether 66:aa:99:2f:dc:24 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 11 overruns 0 carrier 0 collisions 0

Get the POD status,

master $ kubectl get pods -o wide
NAME                                 READY   STATUS    RESTARTS   AGE   IP                NODE           NOMINATED NODE   READINESS GATES
busybox-deployment-8c7dc8548-8bxnw   1/1     Running   0          11s   192.168.49.67     controlplane   <none>           <none>
busybox-deployment-8c7dc8548-kmxst   1/1     Running   0          11s   192.168.196.130   node01         <none>           <none>

Ping the worker node POD from

master $ kubectl exec busybox-deployment-8c7dc8548-8bxnw -- ip route
default via 169.254.1.1 dev eth0
169.254.1.1 dev eth0 scope link

Trigger the ARP request,

master $ kubectl exec busybox-deployment-8c7dc8548-8bxnw -- arp
master $ kubectl exec busybox-deployment-8c7dc8548-8bxnw -- ping 8.8.8.8
PING 8.8.8.8 (8.8.8.8): 56 data bytes
64 bytes from 8.8.8.8: seq=0 ttl=116 time=3.786 ms
^C
master $ kubectl exec busybox-deployment-8c7dc8548-8bxnw -- arp
? (169.254.1.1) at ee:ee:ee:ee:ee:ee [ether]  on eth0

The concept is the same as in the previous modes; the only difference is that the packet reaches the vxlan.calico device, which encapsulates it: the inner Ethernet frame carries the VTEP MAC addresses, and the outer UDP/IP header carries the node IPs. The UDP destination port for VXLAN is 4789. The datastore (etcd here) provides the details of the available nodes and their Pod IP ranges so that vxlan.calico can build the packet.

Note: VXLAN mode needs more processing power than the previous modes.

Disclaimer

This article does not provide any technical advice or recommendation; if you feel so, it is my personal view, not the company I work for.
