
How to use Ceph RBD block storage in k8s (static provisioning and dynamic provisioning with a StorageClass)


Preface

Environment: CentOS 7.9, k8s 1.22.17, a Ceph cluster

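Installing the Ceph cluster

You need a Ceph cluster first. For detailed installation steps see https://blog.csdn.net/MssGuo/article/details/122280657; only a brief outline is given here:

Note: this cluster is installed with the ceph-deploy tool, which the Ceph project no longer recommends; refer to the official Ceph documentation for the current installation method.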

# Prepare 3 servers and configure local hostname resolution

vim /etc/hosts

192.168.118.128 ceph1

192.168.118.129 ceph2

192.168.118.130 ceph3

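# Basic preparation: disable the firewall and SELinux, enable time synchronization

systemctl stop firewalld

systemctl disable firewalld

vim /etc/selinux/config    # set SELINUX=disabled (takes effect after a reboot)

setenforce 0    # switch SELinux to permissive for the current boot

yum install -y ntp

systemctl enable ntpd

systemctl start ntpd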

# Configure the EPEL and Ceph yum repositories

yum install epel-release -y    # install and enable the EPEL repository

vim /etc/yum.repos.d/ceph.repo    # configure the Ceph repository

[ceph]

name=ceph

baseurl=http://mirrors.aliyun.com/ceph/rpm-mimic/el7/x86_64/

enabled=1

gpgcheck=0

priority=1

[ceph-noarch]

name=cephnoarch

baseurl=http://mirrors.aliyun.com/ceph/rpm-mimic/el7/noarch/

enabled=1

gpgcheck=0

priority=1

[ceph-source]

name=Ceph source packages

baseurl=http://mirrors.aliyun.com/ceph/rpm-mimic/el7/SRPMS

enabled=1

gpgcheck=0

priority=1

# Passwordless SSH login

ssh-keygen -t rsa

ssh-copy-id -i /root/.ssh/id_rsa.pub root@ceph1

ssh-copy-id -i /root/.ssh/id_rsa.pub root@ceph2

ssh-copy-id -i /root/.ssh/id_rsa.pub root@ceph3

ssh root@ceph1

ssh root@ceph2

ssh root@ceph3

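# Install the deployment tool on ceph1

yum install ceph-deploy -y

mkdir /etc/ceph && cd /etc/ceph    # create a directory to hold the configuration files generated by ceph-deploy

yum install -y python-setuptools    # install the python-setuptools dependency first to avoid errors

ceph-deploy new node1    # create a new cluster; node1 is a hostname, not the cluster name

yum install ceph ceph-radosgw -y    # install the packages on node1, node2 and node3

# On the client server (if there is one), install:

yum -y install ceph-common

cd /etc/ceph/    # all of the following commands are run in the cluster's configuration directory

vim ceph.conf

public_network = 192.168.118.0/24    # add to the [global] section: the monitor network, a subnet is enough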

ceph-deploy mon create-initial    # create and initialize the monitor

ceph-deploy admin node1 node2 node3    # push the configuration and admin keyring to all nodes

ceph-deploy mon add node2    # add another mon

ceph-deploy mon add node3    # add one more mon

ceph-deploy mgr create node1    # create a mgr; node1 is the hostname

ceph-deploy mgr create node2    # likewise create one on node2

ceph-deploy mgr create node3    # and one on node3

# List the disks on every node: each has two disks, sda and sdb; sdb is the one to add to the distributed storage

ceph-deploy disk list node1    # list the disks on node1

ceph-deploy disk list node2    # list the disks on node2

ceph-deploy disk list node3    # list the disks on node3

# zap wipes all data on the disk, similar to formatting it

ceph-deploy disk zap node1 /dev/sdb    # wipe sdb on node1

ceph-deploy disk zap node2 /dev/sdb    # wipe sdb on node2

ceph-deploy disk zap node3 /dev/sdb    # wipe sdb on node3

ceph-deploy osd create --data /dev/sdb node1    # turn sdb on node1 into an OSD

ceph-deploy osd create --data /dev/sdb node2    # likewise for sdb on node2

ceph-deploy osd create --data /dev/sdb node3    # likewise for sdb on node3

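Creating an RBD image in the Ceph cluster

We want to demonstrate a static PV in k8s backed by Ceph RBD block storage, so the Ceph cluster must first contain an RBD image. The following is run on the Ceph admin node ceph1.

# First you need a pool; create one

ceph osd pool create k8s-pool 16    # create a pool named k8s-pool with 16 placement groups

rbd pool init k8s-pool    # mark the pool for RBD use (required since Luminous)

rbd create k8s --pool k8s-pool --size 1024    # create an RBD image named k8s with a size of 1 GiB

rbd feature disable k8s-pool/k8s object-map fast-diff deep-flatten    # the kernel RBD client on older kernels such as CentOS 7's cannot map images with these features

# Do NOT run rbd map k8s-pool/k8s to map the image as a local device, otherwise the k8s pod will report that it is already in use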

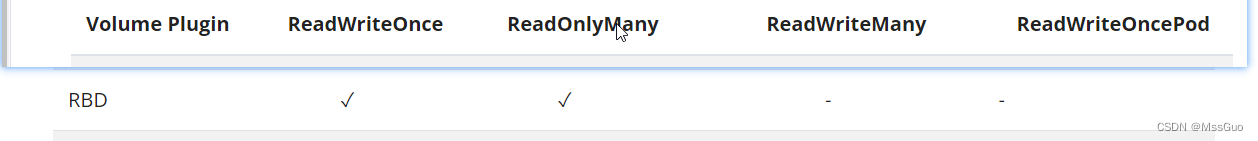

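RBD block storage does not support ReadWriteMany

The access-modes table in the official Kubernetes documentation shows that RBD volumes support ReadWriteOnce and ReadOnlyMany, but not ReadWriteMany:

https://kubernetes.io/docs/concepts/storage/persistent-volumes/#access-modes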

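Configuring RBD block storage in k8s (static provisioning)

Create the Secret

# Run this command on the Ceph cluster to get the key

[root@node1 ceph]# ceph auth get-key client.admin

AQAgv4ZkabOqHBAAq+8Eh/Q/8raOcRLW/atLxA==

[root@node1 ceph]# cat /etc/ceph/ceph.mon.keyring    # this file also contains a key, but it is the mon. key, which differs from the client.admin key above; the admin key is stored in /etc/ceph/ceph.client.admin.keyring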

[mon.]

key = AQA8vYZkAAAAABAAhuMfp97xZYf8JgkWlHZsCA==

caps mon = allow *

[root@node1 ceph]#

# We now have the key of client.admin

# Any client that maps the RBD image needs this key, so we store it in a Secret

echo -n 'AQAgv4ZkabOqHBAAq+8Eh/Q/8raOcRLW/atLxA==' | base64    # base64-encode the string (this is encoding, not encryption); echo appends a newline by default, so -n is essential to drop it

QVFBZ3Y0WmthYk9xSEJBQXErOEVoL1EvOHJhT2NSTFcvYXRMeEE9PQ==    # the encoded result

[root@master ceph]# echo 'QVFBZ3Y0WmthYk9xSEJBQXErOEVoL1EvOHJhT2NSTFcvYXRMeEE9PQ==' | base64 --decode    # decode it to verify

AQAgv4ZkabOqHBAAq+8Eh/Q/8raOcRLW/atLxA==[root@master ceph]#    # no trailing newline, so the encoding is correct

Alternatively, encode the key directly on the Ceph cluster:

ceph auth get-key client.admin | base64    # produces the same encoded string as above
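To see why the trailing newline matters, the sketch below encodes the article's example key both ways. It is only an illustration: `KEY` is the sample value shown above (substitute your own `ceph auth get-key client.admin` output), and `printf '%s'` is used as a portable equivalent of `echo -n`.

```shell
#!/bin/sh
# Example key from this article; replace it with your own
# `ceph auth get-key client.admin` output.
KEY='AQAgv4ZkabOqHBAAq+8Eh/Q/8raOcRLW/atLxA=='

# printf '%s' writes the key without a trailing newline (like echo -n).
GOOD=$(printf '%s' "$KEY" | base64)

# Plain echo appends "\n", which would end up inside the Secret value.
BAD=$(echo "$KEY" | base64)

echo "without newline: $GOOD"
echo "with newline:    $BAD"

# Decoding the correct value gives back exactly the original key.
DECODED=$(printf '%s' "$GOOD" | base64 -d)
[ "$DECODED" = "$KEY" ] && echo "round trip OK"
```

The two encoded strings differ only at the end, which is easy to miss. Note that `$(...)` strips trailing newlines, so comparing decoded values stored in shell variables would not reveal the problem; compare the encoded strings, or print the decoded output directly as done above.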

# Write the Secret manifest

vim ceph-secret.yaml

apiVersion: v1
data:
  key: QVFBZ3Y0WmthYk9xSEJBQXErOEVoL1EvOHJhT2NSTFcvYXRMeEE9PQ==    # the encoded string from above
kind: Secret
metadata:
  name: ceph-secret
  namespace: default
type: kubernetes.io/rbd    # the Secret type built into k8s for rbd

kubectl apply -f ceph-secret.yaml    # create the Secret
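Instead of pasting the encoded string by hand, the manifest can also be generated from the raw key. A minimal sketch, assuming the example key below (replace it with your own) and writing `ceph-secret.yaml` into the current directory; the `kubectl apply` step is left as a comment so the script runs without a cluster.

```shell
#!/bin/sh
# Example key from this article; replace it with `ceph auth get-key client.admin`.
KEY='AQAgv4ZkabOqHBAAq+8Eh/Q/8raOcRLW/atLxA=='

# Encode without a trailing newline; tr removes any line wrapping base64 may add.
KEY_B64=$(printf '%s' "$KEY" | base64 | tr -d '\n')

# Unquoted EOF, so ${KEY_B64} is expanded inside the here-document.
cat > ceph-secret.yaml <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: ceph-secret
  namespace: default
type: kubernetes.io/rbd
data:
  key: ${KEY_B64}
EOF

cat ceph-secret.yaml
# Then: kubectl apply -f ceph-secret.yaml
```

Another common route is `kubectl create secret generic ceph-secret --type=kubernetes.io/rbd --from-literal=key=<key>`, where kubectl performs the base64 encoding itself.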

Create the PV

# First, look at which fields the rbd volume source of a PV supports

[root@master ceph]# kubectl explain pv.spec.rbd

FIELDS:
   fsType       Filesystem type, e.g. "ext4" or "xfs"; defaults to ext4.
   image        The rados image name. Required.
   keyring      Keyring file for RBDUser. Default is /etc/ceph/keyring.
   monitors     A collection of Ceph monitors. Required.
   pool         The rados pool name. Default is rbd.
   readOnly     Whether to mount read-only. Defaults to false.
   secretRef    Secret holding the authentication for RBDUser; if provided, it overrides keyring.
   user         The rados user name. Default is admin.

# Write the PV manifest

vim rbd-pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: rdb-pv    # PVs are cluster-scoped, so no namespace is set
spec:
  accessModes:    # rbd block storage only supports ReadWriteOnce and ReadOnlyMany
  - ReadWriteOnce
  capacity:
    storage: 200M
  rbd:
    monitors:    # IP:port of the Ceph monitors; list several for high availability
    - '192.168.158.142:6789'    # the Ceph monitor port is 6789
    - '192.168.158.143:6789'
    - '192.168.158.144:6789'
    pool: k8s-pool    # pool holding the RBD image, i.e. the k8s-pool created in Ceph above
    image: k8s    # "image" is Ceph's name for an RBD block device, i.e. the k8s image created above
    fsType: xfs    # filesystem created on the device and mounted into the pod
    readOnly: false
    user: admin    # rados user name; defaults to admin, which is what our Ceph cluster uses
    secretRef:
      name: ceph-secret    # Secret holding the admin user's key
  persistentVolumeReclaimPolicy: Delete

kubectl apply -f rbd-pv.yaml

Create the PVC

[root@master ceph]# cat rbd-pvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: rbd-pvc
  namespace: default
spec:
  accessModes:
  - ReadWriteOnce    # rbd block storage only supports ReadWriteOnce and ReadOnlyMany
  resources:
    requests:
      storage: 200M
  storageClassName: ""    # empty string: use no StorageClass, bind to a pre-created PV

kubectl apply -f rbd-pvc.yaml
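With `storageClassName: ""` the claim can bind to any available class-less PV whose size and access mode fit. If several static PVs exist, the claim can be pinned to `rdb-pv` through `spec.volumeName`. A sketch under that assumption; the file name `rbd-pvc-pinned.yaml` is hypothetical:

```shell
#!/bin/sh
# Write a PVC that binds only to the static PV named rdb-pv.
# The file name rbd-pvc-pinned.yaml is just an example.
cat > rbd-pvc-pinned.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: rbd-pvc
  namespace: default
spec:
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 200M
  storageClassName: ""    # no StorageClass: static binding only
  volumeName: rdb-pv      # bind to this specific PV
EOF

# Then: kubectl apply -f rbd-pvc-pinned.yaml
```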

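Install the client package on the k8s nodes

# We don't know which node the pod will be scheduled to, so install ceph-common on every k8s node

# This package provides the rbd command, which kubelet uses to map and mount RBD volumes

yum install ceph-common -y

Deploy the pod

vim nginx-deployment.yaml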

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 1    # start with 1 replica
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.18
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
          name: web
        volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
      volumes:
      - name: www
        persistentVolumeClaim:
          claimName: rbd-pvc

kubectl apply -f nginx-deployment.yaml

Check the pod

[root@master ceph]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-77cbdf8dc8-sqwt2 1/1 Running 0 9m22s

Verify persistence

[root@master ceph]# kubectl exec -it nginx-77cbdf8dc8-sqwt2 -- bash    # open a shell inside the pod

root@nginx-77cbdf8dc8-sqwt2:/# cd /usr/share/nginx/html

root@nginx-77cbdf8dc8-sqwt2:/usr/share/nginx/html# echo "good" >index.html    # create a home page with some content

root@nginx-77cbdf8dc8-sqwt2:/usr/share/nginx/html# curl localhost:80

good

root@nginx-77cbdf8dc8-sqwt2:/usr/share/nginx/html# exit

[root@master ceph]# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE

nginx-77cbdf8dc8-sqwt2 1/1 Running 0 12m 10.244.1.45 node1

[root@master ceph]# curl 10.244.1.45:80    # accessible as expected

good

# Delete the pod

[root@master ceph]# kubectl delete pod nginx-77cbdf8dc8-sqwt2 --grace-period=0 --force

[root@master ceph]# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE

nginx-77cbdf8dc8-fkxrc 1/1 Running 0 20s 10.244.2.45 node2

[root@master ceph]# curl 10.244.2.45:80    # still accessible on the new pod, so the data persisted

good

[root@master ceph]#

# Scale the Deployment to 2 replicas and check whether it still works

[root@master ceph]# kubectl scale deployment nginx --replicas=2

[root@master ceph]# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE

nginx-77cbdf8dc8-564pv 0/1 ContainerCreating 0 9s <none> node1

nginx-77cbdf8dc8-fkxrc 1/1 Running 0 118s 10.244.2.45 node2

[root@master ceph]# kubectl describe pod nginx-77cbdf8dc8-564pv

.......

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 17s default-scheduler Successfully assigned default/nginx-77cbdf8dc8-564pv to node1

Warning FailedAttachVolume 17s attachdetach-controller Multi-Attach error for volume "rdb-pv" Volume is already used by pod(s) nginx-77cbdf8dc8-fkxrc

[root@master ceph]#

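This shows that with ReadWriteOnce the RBD volume can be attached read-write by only one node at a time: the second pod was scheduled to node1 while the volume was still attached to node2 for the first pod, so it failed with a Multi-Attach error. This matches the official documentation, which states that RBD volumes do not support ReadWriteMany.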
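Scaling out cannot work with a ReadWriteOnce RBD volume, but a related problem can affect even a single replica: the Deployment's default RollingUpdate strategy starts the new pod before the old one is removed, so during an update (a new image, for example) the new pod can hit the same Multi-Attach error if it lands on another node. A common mitigation is the Recreate strategy. The sketch below writes a strategic-merge patch; the `$retainKeys` directive, described in the Kubernetes "Update API Objects in Place Using kubectl patch" task, drops the default `rollingUpdate` settings, which are not allowed together with `Recreate`. The file name is an assumption.

```shell
#!/bin/sh
# Strategic-merge patch that switches the nginx Deployment to Recreate.
# $retainKeys keeps only "type" under spec.strategy, removing rollingUpdate.
# 'EOF' is quoted so the shell does not try to expand $retainKeys.
cat > nginx-recreate-patch.yaml <<'EOF'
spec:
  strategy:
    $retainKeys:
    - type
    type: Recreate
EOF

# Then: kubectl patch deployment nginx --patch "$(cat nginx-recreate-patch.yaml)"
```

With Recreate the old pod is stopped before the new one starts, so each update causes a short outage; that is the trade-off for a single-writer volume.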

k8s配置rbd块存储(动态供给)

先将上面静态验证的资源全部删除干净。

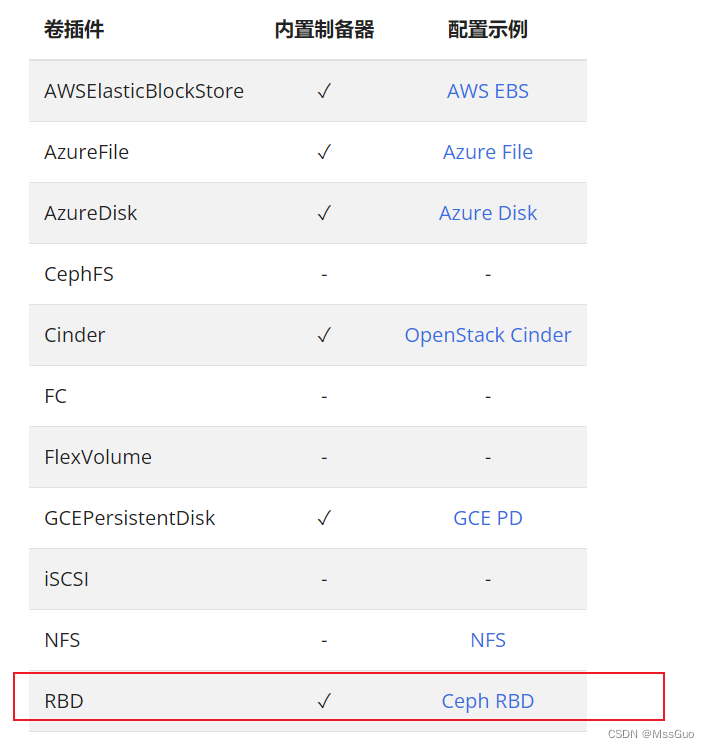

查看官网

#通过查看官网,如下,我们知道,k8s内置了rbd的制备器(Provisioner),所以我们不需要手动创建Provisioner。

https://kubernetes.io/zh-cn/docs/concepts/storage/storage-classes/

ceph集群创建pool

默认你已经有ceph集群了,这里我们要在k8s中的存储类使用ceph的rbd块存储,存储类会动态的创建rbd块,所以我们只需要事先在ceph集群中创建pool池即可:

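# Create the pool in the Ceph cluster

ceph osd pool create k8s-pool 16    # create a pool named k8s-pool

rbd pool init k8s-pool    # mark the pool for RBD use (required since Luminous)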

Create the Secret

# We need the key of client.admin

# Any client that maps an RBD image needs this key, so we store it in a Secret

ceph auth get-key client.admin | base64    # run on the Ceph admin node

QVFBZ3Y0WmthYk9xSEJBQXErOEVoL1EvOHJhT2NSTFcvYXRMeEE9PQ==    # the encoded result

# Write the Secret manifest

vim ceph-secret.yaml

apiVersion: v1
data:
  key: QVFBZ3Y0WmthYk9xSEJBQXErOEVoL1EvOHJhT2NSTFcvYXRMeEE9PQ==    # the encoded string from above
kind: Secret
metadata:
  name: ceph-secret
  namespace: default
type: kubernetes.io/rbd    # the Secret type built into k8s for rbd

kubectl apply -f ceph-secret.yaml    # create the Secret

Create the RBD StorageClass

# Official example: https://kubernetes.io/zh-cn/docs/concepts/storage/storage-classes/#ceph-rbd

vim rbd-storageclass.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ceph-rbd-storageclass
provisioner: kubernetes.io/rbd    # the rbd provisioner built into k8s
allowVolumeExpansion: true    # allow PVCs of this class to be expanded later
parameters:    # put multiple monitors on one line, separated by commas
  monitors: 192.168.158.142:6789,192.168.158.143:6789,192.168.158.144:6789
  adminId: admin    # Ceph user that creates images in the pool
  adminSecretName: ceph-secret    # Secret holding that user's key
  adminSecretNamespace: default
  pool: k8s-pool    # the pool created in Ceph above
  userId: admin    # Ceph user used to map the image on the node; usually a separate non-admin user, admin here
  userSecretName: ceph-secret
  userSecretNamespace: default
  fsType: ext4
  imageFormat: "2"
  imageFeatures: "layering"

kubectl apply -f rbd-storageclass.yaml
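Unlike the PV's `monitors` list, the StorageClass expects a single comma-separated string. If you keep the monitor addresses in a shell variable, the string can be built as below; the addresses are the ones used in this article, and 6789 is the monitor port mentioned earlier.

```shell
#!/bin/sh
# Monitor IPs used in this article; adjust them to your cluster.
MON_IPS="192.168.158.142 192.168.158.143 192.168.158.144"

# printf repeats its format for every argument, appending ":6789,"
# to each IP ($MON_IPS is intentionally unquoted to split on spaces).
MONITORS=$(printf '%s:6789,' $MON_IPS)

# Strip the trailing comma left after the last entry.
MONITORS=${MONITORS%,}

echo "monitors: $MONITORS"
```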

Create the PVC

vim rbd-pvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: rbd-pvc
  namespace: default
spec:
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 200M
  storageClassName: "ceph-rbd-storageclass"

kubectl apply -f rbd-pvc.yaml

The PVC stays Pending

[root@master ceph]# kubectl describe pvc rbd-pvc

Warning ProvisioningFailed 9s (x2 over 18s) persistentvolume-controller Failed to provision volume with StorageClass "ceph-rbd-storageclass": failed to create rbd image: executable file not found in $PATH, command output:

# This problem has been reported for years. The built-in rbd provisioner runs inside kube-controller-manager, and the error indicates that the rbd executable cannot be found there, as the controller-manager log also shows:

[root@master ceph]# kubectl logs kube-controller-manager-master -n kube-system

E0613 03:26:28.072518 1 rbd.go:706] rbd: create volume failed, err: failed to create rbd image: executable file not found in $PATH, command output:

E0613 03:26:28.072599 1 goroutinemap.go:150] Operation for "provision-default/rbd-pvc[2759f972-7d36-44eb-bbdf-d35c049f4f9d]" failed. No retries permitted until 2023-06-13 03:28:30.072573469 +0000 UTC m=+6526.000817530 (durationBeforeRetry 2m2s). Error: failed to create rbd image: executable file not found in $PATH, command output:

I0613 03:26:28.097211 1 event.go:291] "Event occurred" object="default/rbd-pvc" kind="PersistentVolumeClaim" apiVersion="v1" type="Warning" reason="ProvisioningFailed" message="Failed to provision volume with StorageClass "ceph-rbd-storageclass": failed to create rbd image: executable file not found in $PATH, command output: "

# The fix is to deploy a separate provisioner instead of using the built-in rbd provisioner.

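Create the provisioner

Official documentation: https://github.com/kubernetes-retired/external-storage/tree/master/ceph/rbd/deploy

The project (now retired) provides two ways to install the provisioner: without RBAC and with RBAC. The no-RBAC variant suits clusters without RBAC; on a cluster with RBAC enabled, such as a default kubeadm installation, use the RBAC variant.

Method 1 (no RBAC):

vim deployment.yaml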

apiVersion: apps/v1
kind: Deployment
metadata:
  name: rbd-provisioner
spec:
  replicas: 1
  selector:
    matchLabels:
      app: rbd-provisioner
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: rbd-provisioner
    spec:
      containers:
      - name: rbd-provisioner
        image: "quay.io/external_storage/rbd-provisioner:latest"
        env:
        - name: PROVISIONER_NAME
          value: ceph.com/rbd    # remember this value: it is the provisioner's name

kubectl apply -f deployment.yaml

Method 2 (RBAC):

[root@master rbac]# vim clusterrole.yaml

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: rbd-provisioner
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["kube-dns","coredns"]
    verbs: ["list", "get"]
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]

[root@master rbac]#

[root@master rbac]# vim clusterrolebinding.yaml

kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: rbd-provisioner
subjects:
  - kind: ServiceAccount
    name: rbd-provisioner
    namespace: default    # change the namespace if needed
roleRef:
  kind: ClusterRole
  name: rbd-provisioner
  apiGroup: rbac.authorization.k8s.io

[root@master rbac]#

[root@master rbac]# vim role.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: rbd-provisioner
rules:
- apiGroups: [""]
  resources: ["secrets"]
  verbs: ["get"]
- apiGroups: [""]
  resources: ["endpoints"]
  verbs: ["get", "list", "watch", "create", "update", "patch"]

[root@master rbac]#

[root@master rbac]# cat rolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: rbd-provisioner
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: rbd-provisioner
subjects:
- kind: ServiceAccount
  name: rbd-provisioner
  namespace: default    # change the namespace if needed

[root@master rbac]#

[root@master rbac]# vim serviceaccount.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: rbd-provisioner

[root@master rbac]#

[root@master rbac]# vim deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: rbd-provisioner
spec:
  replicas: 1
  selector:
    matchLabels:
      app: rbd-provisioner
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: rbd-provisioner
    spec:
      containers:
      - name: rbd-provisioner
        image: "quay.io/external_storage/rbd-provisioner:latest"
        env:
        - name: PROVISIONER_NAME
          value: ceph.com/rbd    # remember this value: it is the provisioner's name
      serviceAccountName: rbd-provisioner    # run under the ServiceAccount created above

[root@master rbac]#

kubectl apply -f ./rbac    # apply all manifests in the rbac directory

Change the StorageClass's provisioner

[root@master ceph]# cat rbd-storageclass.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ceph-rbd-storageclass
provisioner: ceph.com/rbd    # the provisioner name (PROVISIONER_NAME), not the Deployment name
allowVolumeExpansion: true
parameters:
  monitors: 192.168.158.142:6789,192.168.158.143:6789,192.168.158.144:6789
  adminId: admin
  adminSecretName: ceph-secret
  adminSecretNamespace: default
  pool: k8s-pool
  userId: admin
  userSecretName: ceph-secret
  userSecretNamespace: default
  fsType: ext4
  imageFormat: "2"
  imageFeatures: "layering"

[root@master ceph]#

kubectl delete -f rbd-storageclass.yaml    # the provisioner of an existing StorageClass cannot be changed, so delete and recreate it

kubectl apply -f rbd-storageclass.yaml

# Then recreate the PVC: this time a PV is created right away

Recreate the PVC

kubectl delete -f rbd-pvc.yaml

kubectl apply -f rbd-pvc.yaml

[root@master ceph]# kubectl get -f rbd-pvc.yaml    # the StorageClass has created a PV and the PVC is Bound

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

rbd-pvc Bound pvc-ab63babe-c743-4ea4-8d59-9944dc9ac000 191Mi RWO ceph-rbd-storageclass 8m44s
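Why does a 200M request show up as 191Mi? `M` is a decimal suffix (10^6 bytes) whereas `Mi` is binary (2^20 bytes), and the provisioner appears to round the requested size up to whole MiB when it creates the image (an inference from the output above, not checked against the provisioner's source). The arithmetic:

```shell
#!/bin/sh
REQUEST_BYTES=$((200 * 1000 * 1000))   # 200M = 200,000,000 bytes
MIB=$((1024 * 1024))                   # 1Mi  = 1,048,576 bytes

# Integer ceiling division: round up to the next whole MiB.
SIZE_MIB=$(( (REQUEST_BYTES + MIB - 1) / MIB ))

echo "${SIZE_MIB}Mi"
```

200,000,000 / 1,048,576 is about 190.7, so rounding up gives 191Mi. The pod later reports an even smaller size in `df -Th` (181M), most likely because ext4 metadata such as the journal and inode tables occupies part of the device.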

[root@master ceph]#

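# Back on the Ceph admin node, take a look

[root@node1 ~]# rbd ls k8s-pool    # an image, i.e. an RBD block device, has been created

kubernetes-dynamic-pvc-d2ce2db1-09a7-11ee-a9cd-72b4f5f91329    # the name doesn't matter; the rbd-provisioner log shows the messages about creating the rbd image

[root@node1 ~]#

Verify with a Deployment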

[root@master ceph]# cat nginx-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.18
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
          name: web
        volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
      volumes:
      - name: www
        persistentVolumeClaim:
          claimName: rbd-pvc

[root@master ceph]#

[root@master ceph]# kubectl apply -f nginx-deployment.yaml

Verify

[root@master ceph]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-77cbdf8dc8-nsdwn 1/1 Running 0 2m20s

[root@master ceph]# kubectl exec -it nginx-77cbdf8dc8-nsdwn -- bash

root@nginx-77cbdf8dc8-nsdwn:/# df -Th

Filesystem Type Size Used Avail Use% Mounted on

/dev/rbd0 ext4 181M 1.6M 176M 1% /usr/share/nginx/html

root@nginx-77cbdf8dc8-nsdwn:/# echo "good" >>/usr/share/nginx/html/index.html

root@nginx-77cbdf8dc8-nsdwn:/# curl localhost:80

good

root@nginx-77cbdf8dc8-nsdwn:/# exit

[root@master ceph]# kubectl describe pod nginx-77cbdf8dc8-nsdwn | grep -i ip

IP: 10.244.1.47

[root@master ceph]# curl 10.244.1.47:80

good

[root@master ceph]# kubectl delete pod nginx-77cbdf8dc8-nsdwn

[root@master ceph]# kubectl describe pod nginx-77cbdf8dc8-dczhq | grep -i ip

IP: 10.244.2.48

[root@master ceph]# curl 10.244.2.48:80

good

[root@master ceph]#

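# Verification succeeded. That RBD cannot be mounted read-write by two or more pods on different nodes at the same time is not re-tested here.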

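Summary

1. First you need a Ceph cluster;

2. Install the client package on every k8s node: yum -y install ceph-common;

3. For static provisioning, first create a pool and an RBD block device (also called an image) in the Ceph cluster, then create a Secret, whose main job is to hold the key;

4. Create the PV, the PVC and the pod;

5. For dynamic provisioning, first create a pool in the Ceph cluster (no need to create the image, the StorageClass creates it automatically), and likewise create a Secret holding the key;

6. Create the provisioner: because the provisioner built into k8s fails in this setup, deploy an RBD provisioner manually following the official docs;

7. Create the StorageClass, using the provisioner and Secret created above;

8. Create the PVC; the StorageClass automatically creates the PV, and running rbd ls k8s-pool on the Ceph admin node shows the automatically created image;

9. Mount the PVC in a pod;

10. Because RBD does not support the ReadWriteMany access mode, RBD block storage is unsuitable when multiple pods must read and write the same PVC. It fits pods created by a StatefulSet better, since each StatefulSet pod gets its own PV, which matches how RBD works.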
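For the StatefulSet case in point 10, each replica gets its own PVC, and therefore its own RBD image, through `volumeClaimTemplates`. A minimal sketch, assuming the `ceph-rbd-storageclass` StorageClass from above; the names `nginx-sts` and `nginx-sts.yaml` are hypothetical, and the headless Service referenced by `serviceName` is not shown:

```shell
#!/bin/sh
cat > nginx-sts.yaml <<'EOF'
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: nginx-sts
spec:
  serviceName: nginx-sts        # headless Service (not created here)
  replicas: 2
  selector:
    matchLabels:
      app: nginx-sts
  template:
    metadata:
      labels:
        app: nginx-sts
    spec:
      containers:
      - name: nginx
        image: nginx:1.18
        ports:
        - containerPort: 80
          name: web
        volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
  volumeClaimTemplates:         # one PVC per pod: www-nginx-sts-0, www-nginx-sts-1
  - metadata:
      name: www
    spec:
      accessModes:
      - ReadWriteOnce
      storageClassName: ceph-rbd-storageclass
      resources:
        requests:
          storage: 200M
EOF

# Then: kubectl apply -f nginx-sts.yaml
```

Because every pod mounts its own ReadWriteOnce volume, scaling the StatefulSet does not run into the Multi-Attach error seen with the Deployment.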

Windtalker! Enjoys exploring all kinds of technology, currently works in backend development, and loves life and work.
