
K8S binary single-node install: one-click deployment of K8S v1.21.x


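1. Before you install

The installation shell scripts are listed at the end of this article.

1. Configure a static IP in advance, and replace the IP 192.168.1.31 in the scripts with your own.

2. Create the package directory /home/software/shell and put all tar packages and shell scripts there.

3. Set up passwordless SSH login to all nodes.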
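Replacing the sample IP by hand in every script is error-prone, so the substitution can be scripted. The sketch below is only an illustration: `replace_ip` is a hypothetical helper that is not part of the original scripts, and it assumes GNU `sed` (for `-i`) and that all scripts sit in one directory as `*.sh` files.

```shell
#!/bin/sh
# replace_ip OLD NEW DIR
# Hypothetical helper: rewrite every occurrence of OLD with NEW in DIR/*.sh.
# Assumes GNU sed, which supports in-place editing with -i.
replace_ip() (
    old=$1
    new=$2
    dir=$3
    # Escape the dots so they match literally instead of "any character".
    pattern=$(printf '%s' "$old" | sed 's/\./\\./g')
    for f in "$dir"/*.sh; do
        [ -f "$f" ] || continue
        sed -i "s/$pattern/$new/g" "$f"
    done
)
```

For example, `replace_ip 192.168.1.31 192.168.1.50 /home/software/shell` would rewrite the master IP and leave 192.168.1.32 to 192.168.1.34 untouched. It is worth keeping a copy of the scripts first, because the edit happens in place.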

Pull the following images in advance:

[root@master01 ~]# docker images
REPOSITORY                     TAG       IMAGE ID       CREATED       SIZE
calico/node                    v3.15.1   1470783b1474   2 years ago   262MB
calico/pod2daemon-flexvol      v3.15.1   a696ebcb2ac7   2 years ago   112MB
calico/cni                     v3.15.1   2858353c1d25   2 years ago   217MB
calico/kube-controllers        v3.15.1   8ed9dbffe350   2 years ago   53.1MB
kubernetesui/dashboard         v2.0.0    8b32422733b3   3 years ago   222MB
kubernetesui/metrics-scraper   v1.0.4    86262685d9ab   3 years ago   36.9MB
lizhenliang/coredns            1.6.7     67da37a9a360   3 years ago   43.8MB
busybox                        1.28.4    8c811b4aec35   4 years ago   1.15MB
lizhenliang/pause-amd64        3.0       99e59f495ffa   6 years ago   747kB
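The image list above can be turned into a small pre-pull script. This is a minimal sketch rather than part of the original scripts: the function names `list_images` and `pull_images` and the `DOCKER` override variable are assumptions made for illustration, and the repository:tag pairs are copied from the listing.

```shell
#!/bin/sh
# Image names taken from the `docker images` listing, written as repository:tag.
list_images() {
    cat <<'EOF'
calico/node:v3.15.1
calico/pod2daemon-flexvol:v3.15.1
calico/cni:v3.15.1
calico/kube-controllers:v3.15.1
kubernetesui/dashboard:v2.0.0
kubernetesui/metrics-scraper:v1.0.4
lizhenliang/coredns:1.6.7
busybox:1.28.4
lizhenliang/pause-amd64:3.0
EOF
}

# Pull each image in turn and stop at the first failure.
# DOCKER is a hypothetical override, e.g. DOCKER=echo prints the commands only.
pull_images() {
    for image in $(list_images); do
        "${DOCKER:-docker}" pull "$image" || return 1
    done
}
```

Running `DOCKER=echo pull_images` first gives a dry run that shows which images would be pulled; calling `pull_images` on its own performs the pulls once Docker is running.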

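Download the following software packages:

1) Download the etcd binaries

URL: https://github.com/etcd-io/etcd/releases/download/v3.4.9/etcd-v3.4.9-linux-amd64.tar.gz

2) Download the Docker binaries

Docker is used as the container engine here. You can substitute another one, such as containerd.

Download URL: https://download.docker.com/linux/static/stable/x86_64/docker-19.03.9.tgz

3) Download the Kubernetes binaries

Download URL: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.20.md

Note: the page lists many packages. The server package alone is enough, because it contains the binaries for both the Master and the Worker Node.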

执行前提醒

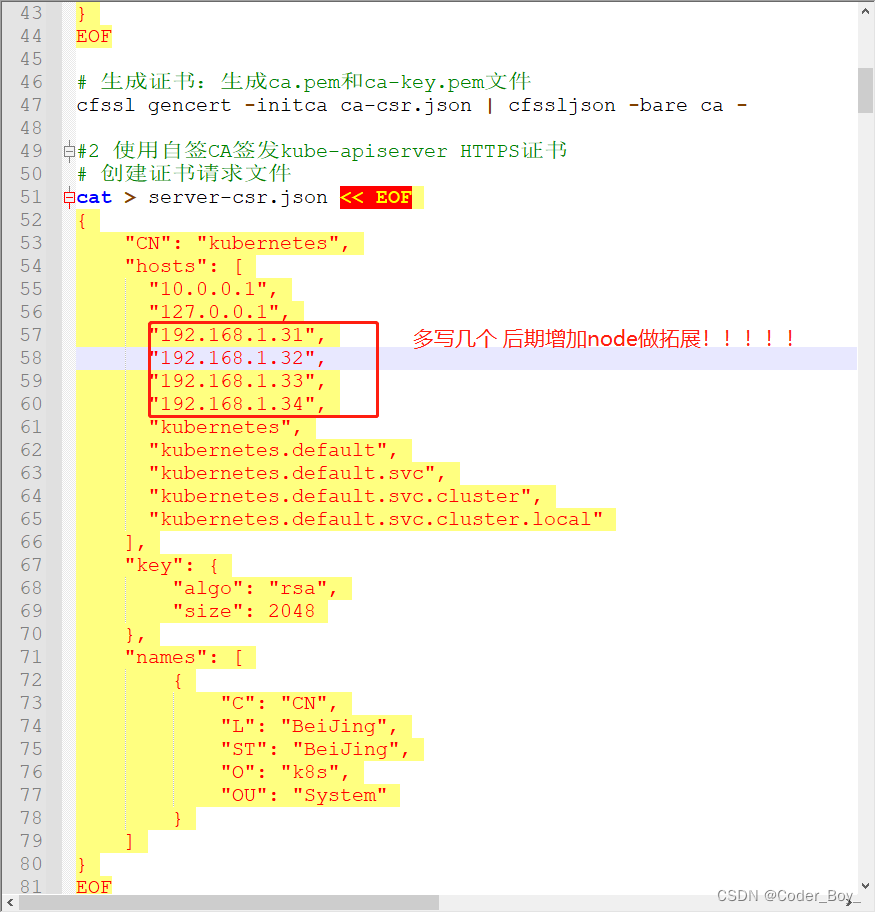

注意:install_k8s_base.sh中的文件hosts字段中IP为所有Master/LB/VIP IP,一个都不能少!为了方便后期扩容可以多写几个预留的IP

这么有几个IP 就可以后期增加几个node节点!! 我这里可以有4个节点。

ETCD 默认安装单节点,可以修改配置增加节点,需要每个节点都按照 不然无法启动ETCD集群!!
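Since a missing entry in the hosts field only shows up later as a TLS error, a quick pre-flight check can help. The sketch below is a rough illustration under stated assumptions: `csr_has_host` and `missing_hosts` are hypothetical helpers, and they only look for the quoted value anywhere in the CSR file rather than parsing the JSON.

```shell
#!/bin/sh
# csr_has_host FILE HOST
# Succeeds if HOST appears as a quoted string in FILE (e.g. server-csr.json).
# This is a plain text match, not a JSON parser.
csr_has_host() {
    grep -qF "\"$2\"" "$1"
}

# missing_hosts FILE HOST...
# Prints one "missing: HOST" line per absent entry; fails if any is absent.
missing_hosts() (
    file=$1
    shift
    status=0
    for host in "$@"; do
        csr_has_host "$file" "$host" || { echo "missing: $host"; status=1; }
    done
    exit "$status"
)
```

For example, `missing_hosts ~/TLS/k8s/server-csr.json 192.168.1.31 192.168.1.32` prints nothing when both IPs are present. Once the certificate has been issued, `openssl x509 -in server.pem -noout -text` shows the actual Subject Alternative Name list.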

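2. Installation steps

The most likely failures involve certificates and networking, which are also the core and hardest parts of K8S. To diagnose them, consult the official documentation, the execution logs, component version compatibility, configuration parameters, and system parameters.

Run the following scripts in order:

sh pre_env.sh

sh install_docker.sh

sh install_etcd.sh

sh install_k8s_base.sh

sh install_net_plugin.sh

sh install_coredns_board.sh

The file /home/software/shell/board_token holds the dashboard token. Use it to log in to the dashboard.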
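Because each script depends on the previous one having succeeded, it can be convenient to run them through a wrapper that stops at the first failure. The following is a minimal sketch, not part of the original scripts; `run_steps` is a hypothetical name, and it invokes the scripts with `sh` as above.

```shell
#!/bin/sh
# run_steps DIR SCRIPT...
# Hypothetical wrapper: run each script from DIR in the given order and
# stop as soon as one of them exits with a non-zero status.
run_steps() (
    dir=$1
    shift
    cd "$dir" || exit 1
    for script in "$@"; do
        echo ">>> $script"
        sh "$script" || { echo "FAILED: $script" >&2; exit 1; }
    done
)
```

A call such as `run_steps /home/software/shell pre_env.sh install_docker.sh install_etcd.sh install_k8s_base.sh install_net_plugin.sh install_coredns_board.sh` would mirror the order listed above. Note that a script only counts as failed if its last command fails, so this catches less than adding `set -e` to each script would.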

To uninstall the network plugin, run:

sh clear_net_plugin.sh

The static IP can also be set by editing the IP settings in static_ip_config.sh and then running:

sh static_ip_config.sh

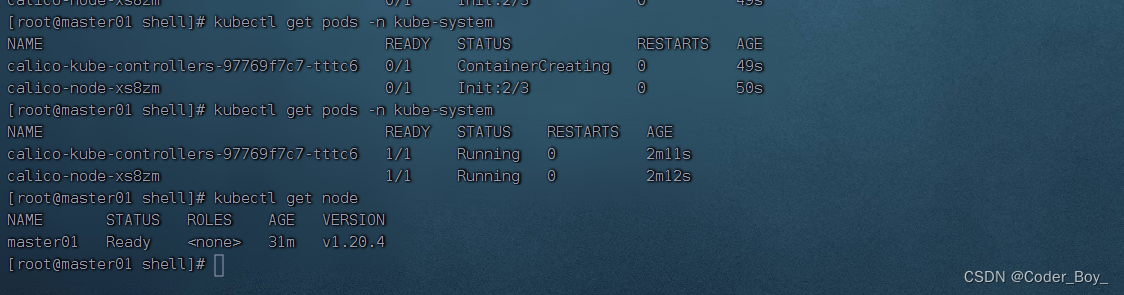

3. Final result

Node status

Pod status

Memory usage:

My machine has 16 GB of RAM. With K8S deployed and one extra node added, memory usage in the initial state is as follows:

![image](https://img-blog.csdnimg.cn/ddebf77520d548c4b9a2dcc20a76eba3.png)

4. Common problems

Problem 1: the apiserver has not been authorized to access the kubelet

![image](https://img-blog.csdnimg.cn/9adce64c71f54fc7a18a0c86aea43dc0.png)

Check whether the ClusterRole grants access to pods/log:

cat > apiserver-to-kubelet-rbac.yaml << EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
      - pods/log
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kubernetes
EOF

kubectl apply -f apiserver-to-kubelet-rbac.yaml

5. Shell deployment scripts

pre_env.sh

#!/bin/bash
# 1. Stop and disable the firewall
systemctl stop firewalld
systemctl disable firewalld
echo "Firewall disabled!"

# 2. Disable SELinux
setenforce 0  # temporary
sed -i 's/enforcing/disabled/' /etc/selinux/config  # permanent
echo "SELinux disabled!"

# 3. Disable swap
swapoff -a  # temporary
sed -ri 's/.*swap.*/#&/' /etc/fstab  # permanent
echo "Swap disabled!"

# 4. Set the hostname according to your plan
hostnamectl set-hostname master01

# 5. Add the host entries on the master
cat >> /etc/hosts << EOF
192.168.1.31 master01
192.168.1.32 master02
192.168.1.33 node01
192.168.1.34 node02
EOF
echo "Host entries added on the master!"

# 6. Pass bridged IPv4 traffic to the iptables chains
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
sysctl --system  # apply

# 7. Time synchronization
yum install ntpdate -y
ntpdate time.windows.com

install_docker.sh

#!/bin/bash
# 1. Unpack the binary package
tar zxvf docker-19.03.9.tgz
mv docker/* /usr/bin
echo "Unpack success!"

# 2. Manage docker with systemd
# \$MAINPID is escaped so that the shell writes it into the unit file literally
cat > /usr/lib/systemd/system/docker.service << EOF
[Unit]
Description=Docker Application Container Engine
Documentation=https://docs.docker.com
After=network-online.target firewalld.service
Wants=network-online.target

[Service]
Type=notify
ExecStart=/usr/bin/dockerd
ExecReload=/bin/kill -s HUP \$MAINPID
LimitNOFILE=infinity
LimitNPROC=infinity
LimitCORE=infinity
TimeoutStartSec=0
Delegate=yes
KillMode=process
Restart=on-failure
StartLimitBurst=3
StartLimitInterval=60s

[Install]
WantedBy=multi-user.target
EOF

# 3. Create the configuration file
mkdir -p /etc/docker
cat > /etc/docker/daemon.json << EOF
{
  "registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"]
}
EOF

# 4. Start docker and enable it at boot
systemctl daemon-reload
systemctl start docker
systemctl enable docker
docker version

install_etcd.sh

#!/bin/bash
## Install the cfssl certificate tools
#mkdir cfssl && cd cfssl/
cd /home/software/shell
chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64
mv -f cfssl_linux-amd64 /usr/local/bin/cfssl
mv -f cfssljson_linux-amd64 /usr/local/bin/cfssljson
mv -f cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

# Generate the etcd certificates
# 1 Self-signed certificate authority (CA)
# 1. Create the working directories
mkdir -p ~/TLS/{etcd,k8s} && cd ~/TLS/etcd

# 2. Self-signed CA

cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json << EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

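# 3. Generate the certificate: this creates ca.pem and ca-key.pem
cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

## Issue the etcd HTTPS certificate with the self-signed CA
# Create the certificate signing request file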

cat > server-csr.json << EOF

{

"CN": "etcd",

"hosts": [

"192.168.1.31"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

## Generate the certificate: this creates server.pem and server-key.pem
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

# 1. Create the working directories and unpack the binary package
cd /home/software/shell
mkdir /opt/etcd/{bin,cfg,ssl} -p
tar zxvf etcd-v3.4.9-linux-amd64.tar.gz
mv etcd-v3.4.9-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

# 2. Create the etcd configuration file

cat > /opt/etcd/cfg/etcd.conf << EOF

#[Member]

ETCD_NAME="etcd-1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.1.31:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.1.31:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.31:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.31:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.1.31:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

# 3. Manage etcd with systemd
cat > /usr/lib/systemd/system/etcd.service << EOF
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=/opt/etcd/cfg/etcd.conf
ExecStart=/opt/etcd/bin/etcd \
--cert-file=/opt/etcd/ssl/server.pem \
--key-file=/opt/etcd/ssl/server-key.pem \
--peer-cert-file=/opt/etcd/ssl/server.pem \
--peer-key-file=/opt/etcd/ssl/server-key.pem \
--trusted-ca-file=/opt/etcd/ssl/ca.pem \
--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem \
--logger=zap
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

# 4. Copy the certificates generated earlier to the paths used in the configuration
cp ~/TLS/etcd/ca*pem ~/TLS/etcd/server*pem /opt/etcd/ssl/

# 5. Start etcd and enable it at boot
systemctl daemon-reload
systemctl start etcd
systemctl enable etcd
systemctl status etcd

# 6. Check the cluster status
ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.1.31:2379" endpoint health --write-out=table
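The health check at the end of install_etcd.sh runs immediately after the service starts, so it may fail simply because etcd is not ready yet. One way to handle that is a generic retry loop. The sketch below is an assumption-laden illustration: `retry` is a hypothetical helper that does not appear in the original scripts.

```shell
#!/bin/sh
# retry ATTEMPTS DELAY COMMAND...
# Hypothetical helper: run COMMAND until it succeeds, at most ATTEMPTS times,
# sleeping DELAY seconds between tries. Fails if every attempt fails.
retry() (
    attempts=$1
    delay=$2
    shift 2
    n=1
    until "$@"; do
        [ "$n" -lt "$attempts" ] || exit 1
        n=$((n + 1))
        sleep "$delay"
    done
)
```

A possible use would be `retry 10 3 env ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.1.31:2379" endpoint health`, which waits up to roughly 30 seconds before giving up. The same helper could wrap the later `kubectl` checks.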

install_k8s_base.sh

#!/bin/bash

# 1. Self-signed certificate authority (CA)

cd ~/TLS/k8s

cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json << EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

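# Generate the certificate: this creates ca.pem and ca-key.pem
cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

# 2. Issue the kube-apiserver HTTPS certificate with the self-signed CA
# Create the certificate signing request file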

cat > server-csr.json << EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.1.31",

"192.168.1.32",

"192.168.1.33",

"192.168.1.34",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

# 3. Generate the certificate: this creates server.pem and server-key.pem
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

## Deploy kube-apiserver
# 1. Unpack the binary package (the tarball is in /home/software/shell)
mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}
cd /home/software/shell
tar zxvf kubernetes-server-linux-amd64.tar.gz
cd kubernetes/server/bin
cp kube-apiserver kube-scheduler kube-controller-manager /opt/kubernetes/bin
cp kubectl /usr/bin/

# 2. Create the configuration file

cat > /opt/kubernetes/cfg/kube-apiserver.conf << EOF

KUBE_APISERVER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--etcd-servers=https://192.168.1.31:2379 \

--bind-address=192.168.1.31 \

--secure-port=6443 \

--advertise-address=192.168.1.31 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth=true \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-32767 \

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \

--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \

--proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \

--proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \

--requestheader-allowed-names=kubernetes \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-group-headers=X-Remote-Group \

--requestheader-username-headers=X-Remote-User \

--enable-aggregator-routing=true \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"

EOF

# 3. Copy the certificates generated earlier to the paths used in the configuration
cp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem /opt/kubernetes/ssl/

# 4. Create the token file
# Format: token,user name,UID,group

cat > /opt/kubernetes/cfg/token.csv << EOF

`head -c 16 /dev/urandom | od -An -t x | tr -d ' '`,kubelet-bootstrap,10001,"system:node-bootstrapper"

EOF

# 5. Manage kube-apiserver with systemd

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

# 6. Start kube-apiserver and enable it at boot
systemctl daemon-reload
systemctl start kube-apiserver
systemctl enable kube-apiserver
systemctl status kube-apiserver

## Deploy kube-controller-manager
# 1. Create the configuration file

cat > /opt/kubernetes/cfg/kube-controller-manager.conf << EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--leader-elect=true \

--kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \

--bind-address=127.0.0.1 \

--allocate-node-cidrs=true \

--cluster-cidr=10.244.0.0/16 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--cluster-signing-duration=87600h0m0s"

EOF

# 2. Generate the kubeconfig file
# Switch to the working directory
cd ~/TLS/k8s
# Create the certificate signing request file

cat > kube-controller-manager-csr.json << EOF

{

"CN": "system:kube-controller-manager",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

# Generate the kubeconfig file
KUBE_CONFIG="/opt/kubernetes/cfg/kube-controller-manager.kubeconfig"
KUBE_APISERVER="https://192.168.1.31:6443"
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-controller-manager \
  --client-certificate=./kube-controller-manager.pem \
  --client-key=./kube-controller-manager-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-controller-manager \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

# 3. Manage kube-controller-manager with systemd

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

# 4. Start kube-controller-manager and enable it at boot
systemctl daemon-reload
systemctl start kube-controller-manager
systemctl enable kube-controller-manager
systemctl status kube-controller-manager

## Deploy kube-scheduler
# 1. Create the configuration file

cat > /opt/kubernetes/cfg/kube-scheduler.conf << EOF

KUBE_SCHEDULER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--leader-elect \

--kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \

--bind-address=127.0.0.1"

EOF

# 2. Generate the kubeconfig file
# Switch to the working directory
cd ~/TLS/k8s
# Create the certificate signing request file

cat > kube-scheduler-csr.json << EOF

{

"CN": "system:kube-scheduler",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

# 3. Generate the kubeconfig file
KUBE_CONFIG="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"
KUBE_APISERVER="https://192.168.1.31:6443"
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-scheduler \
  --client-certificate=./kube-scheduler.pem \
  --client-key=./kube-scheduler-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-scheduler \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

# 4. Manage kube-scheduler with systemd

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf

ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

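# 5. Start kube-scheduler and enable it at boot
systemctl daemon-reload
systemctl start kube-scheduler
systemctl enable kube-scheduler
systemctl status kube-scheduler

## Check the cluster status
# 1. Generate the certificate that kubectl uses to connect to the cluster: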

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

# 2. Generate the kubeconfig file:
mkdir -p /root/.kube
KUBE_CONFIG="/root/.kube/config"
KUBE_APISERVER="https://192.168.1.31:6443"
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials cluster-admin \
  --client-certificate=./admin.pem \
  --client-key=./admin-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=cluster-admin \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

# 3. Check the status of the cluster components with kubectl
kubectl get cs
echo "===========kubectl get cs==SUCCESS===================================>"

## Authorize the kubelet-bootstrap user to request certificates
kubectl create clusterrolebinding kubelet-bootstrap \
  --clusterrole=system:node-bootstrapper \
  --user=kubelet-bootstrap

## Deploy the Worker Node
# 1. Create the working directories and copy the binaries
# Create the working directories on every worker node (they already exist on the master; newly added nodes need them)
mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}
# Copy the files from the unpacked k8s server package
cd /home/software/shell/kubernetes/server/bin
cp kubelet kube-proxy /opt/kubernetes/bin

## 2. Deploy kubelet
# 1. Create the configuration file

cat > /opt/kubernetes/cfg/kubelet.conf << EOF

KUBELET_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--hostname-override=master01 \

--network-plugin=cni \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet-config.yml \

--cert-dir=/opt/kubernetes/ssl \

--pod-infra-container-image=lizhenliang/pause-amd64:3.0"

EOF

# 2. Create the parameter file
cat > /opt/kubernetes/cfg/kubelet-config.yml << EOF
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
cgroupDriver: cgroupfs
clusterDNS:
- 10.0.0.2
clusterDomain: cluster.local
failSwapOn: false
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /opt/kubernetes/ssl/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
maxOpenFiles: 1000000
maxPods: 110
EOF

echo "===========ALL==SUCCESS===================================>"

# 3. Generate the bootstrap kubeconfig that kubelet uses to join the cluster for the first time
KUBE_CONFIG="/opt/kubernetes/cfg/bootstrap.kubeconfig"
KUBE_APISERVER="https://192.168.1.31:6443"
# The token must match token.csv, so read it from the first field of that file
TOKEN=$(cut -d, -f1 /opt/kubernetes/cfg/token.csv)

# Generate the kubelet bootstrap kubeconfig file
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials "kubelet-bootstrap" \
  --token=${TOKEN} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user="kubelet-bootstrap" \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

# 4. Manage kubelet with systemd

cat > /usr/lib/systemd/system/kubelet.service << EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf

ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

# 5. Start kubelet and enable it at boot
systemctl daemon-reload
systemctl start kubelet
systemctl enable kubelet
systemctl status kubelet

# 6. Approve the kubelet certificate request so the node joins the cluster
# List the kubelet certificate requests
kubectl get csr
# Approve the pending requests
APPLY_NODE=$(kubectl get csr | awk '/Pending/{print $1}')
kubectl certificate approve $APPLY_NODE
# List the nodes (the network plugin is not deployed yet, so the node stays NotReady)
kubectl get node

## Deploy kube-proxy
# 1. Create the configuration file

cat > /opt/kubernetes/cfg/kube-proxy.conf << EOF

KUBE_PROXY_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--config=/opt/kubernetes/cfg/kube-proxy-config.yml"

EOF

# 2. Create the parameter file
# clusterCIDR is the Pod network, matching --cluster-cidr of kube-controller-manager
cat > /opt/kubernetes/cfg/kube-proxy-config.yml << EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
metricsBindAddress: 0.0.0.0:10249
clientConnection:
  kubeconfig: /opt/kubernetes/cfg/kube-proxy.kubeconfig
hostnameOverride: master01
clusterCIDR: 10.244.0.0/16
EOF

# 3. Generate kube-proxy.kubeconfig
# Generate the kube-proxy certificate:
# Switch to the working directory
cd ~/TLS/k8s
# Create the certificate signing request file

cat > kube-proxy-csr.json << EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

# Generate the kubeconfig file
KUBE_CONFIG="/opt/kubernetes/cfg/kube-proxy.kubeconfig"
KUBE_APISERVER="https://192.168.1.31:6443"
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-proxy \
  --client-certificate=./kube-proxy.pem \
  --client-key=./kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

# 4. Manage kube-proxy with systemd

cat > /usr/lib/systemd/system/kube-proxy.service << EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-proxy.conf

ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

# 5. Start kube-proxy and enable it at boot

systemctl daemon-reload

systemctl start kube-proxy

systemctl enable kube-proxy

systemctl status kube-proxy

install_net_plugin.sh

#!/bin/bash
# Deploy Calico
kubectl apply -f calico.yaml
kubectl get pods -n kube-system
# Once all Calico Pods are Running, the node becomes Ready
kubectl get node

# Authorize the apiserver to access the kubelet
# Use case: for example, kubectl logs
cat > apiserver-to-kubelet-rbac.yaml << EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
      - pods/log
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kubernetes
EOF

kubectl apply -f apiserver-to-kubelet-rbac.yaml

install_coredns_board.sh

#!/bin/bash
# Deploy the dashboard
kubectl apply -f kubernetes-dashboard.yaml
# Check the deployment
kubectl get pods,svc -n kubernetes-dashboard
# Create a service account and bind it to the built-in cluster-admin cluster role:
kubectl create serviceaccount dashboard-admin -n kube-system
kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin
kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}') > board_token

# Deploy CoreDNS
# CoreDNS resolves Service names inside the cluster:
kubectl apply -f coredns.yaml
kubectl get pods -n kube-system

# DNS resolution test:
#kubectl run -it --rm dns-test --image=busybox:1.28.4 sh
#
#If you don't see a command prompt, try pressing enter.
#/ # nslookup kubernetes
#Server: 10.0.0.2
#Address 1: 10.0.0.2 kube-dns.kube-system.svc.cluster.local
#
#Name: kubernetes
#Address 1: 10.0.0.1 kubernetes.default.svc.cluster.local

static_ip_config.sh

#!/bin/bash

cat >> /etc/sysconfig/network-scripts/ifcfg-enp0s3 << EOF

IPADDR=192.168.1.32

NETMASK=255.255.255.0

GATEWAY=192.168.1.1

DNS1=8.8.8.8

EOF

sed -i 's/dhcp/static/' /etc/sysconfig/network-scripts/ifcfg-enp0s3

systemctl restart network

clear_net_plugin.sh

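#!/bin/bash
# 1. Delete the Calico resources
kubectl delete -f calico.yaml
# 2. Look for the tunl0 interface
ip addr show
# 3. Unload the ipip module, which removes the tunl0 interface
modprobe -v -r ipip
# 4. Delete all files under /etc/cni/net.d/
rm -rf /etc/cni/net.d/*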

ssh_auto.sh

#!/bin/bash

#------------------------------------------#

# FileName: ssh_auto.sh

# Revision: 1.1.0

# Date: 2017-07-14 04:50:33

# Author: vinsent

# Email: hyb_admin@163.com

# Website: www.vinsent.cn

# Description: This script can achieve ssh password-free login,

# and can be deployed in batches, configuration

#------------------------------------------#

# Copyright: 2017 vinsent

# License: GPL 2+

#------------------------------------------#

yum -y install expect

# Create the key pair if it does not exist yet
[ ! -f /root/.ssh/id_rsa.pub ] && ssh-keygen -t rsa -N '' -f /root/.ssh/id_rsa &>/dev/null

while read line;do
    ip=`echo $line | cut -d " " -f1`          # IP from the file
    user_name=`echo $line | cut -d " " -f2`   # user name from the file
    pass_word=`echo $line | cut -d " " -f3`   # password from the file
    # Copy the public key to the target host; expect answers the prompts automatically
    expect <<EOF
spawn ssh-copy-id -i /root/.ssh/id_rsa.pub $user_name@$ip
expect {
    "yes/no" { send "yes\r"; exp_continue }
    "password" { send "$pass_word\r" }
}
expect eof
EOF
done < /root/host_ip.txt   # read the file that stores the host list

pscp.pssh -h /root/host_ip.txt /root/your_scripts.sh /root   # push your deployment script to the target hosts
pssh -h /root/host_ip.txt -i bash /root/your_scripts.sh      # run your deployment script remotely

Host list file host_ip.txt

192.168.1.31 root 1234567

192.168.1.33 root 123456
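Each line of host_ip.txt holds three space-separated fields: IP, user name, and password. As a minimal sketch of reading that format without three separate `cut` calls, the hypothetical helper below (not part of ssh_auto.sh) lets `read` split the fields and prints the `user@ip` targets, skipping blank lines and lines starting with `#`.

```shell
#!/bin/sh
# list_targets FILE
# Hypothetical helper: print "user@ip" for each entry of a host list whose
# lines have the form "IP USER PASSWORD". The password field is read but
# deliberately not printed.
list_targets() {
    while read -r ip user _password; do
        case $ip in
            ''|'#'*) continue ;;   # skip blank lines and comments
        esac
        printf '%s@%s\n' "$user" "$ip"
    done < "$1"
}
```

With the two sample entries above, `list_targets /root/host_ip.txt` would print `root@192.168.1.31` and `root@192.168.1.33`, which is a quick way to confirm the file parses as intended before running the key distribution.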
