
Deep Learning Week J8: Inception v1 in Practice and Analysis


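🍨 This article is an internal article of the [🔗365-Day Deep Learning Training Camp], free to read for a limited time (copyright belongs to *K同学啊*)

🍖 Author: [K同学啊]

📌 This week's tasks: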

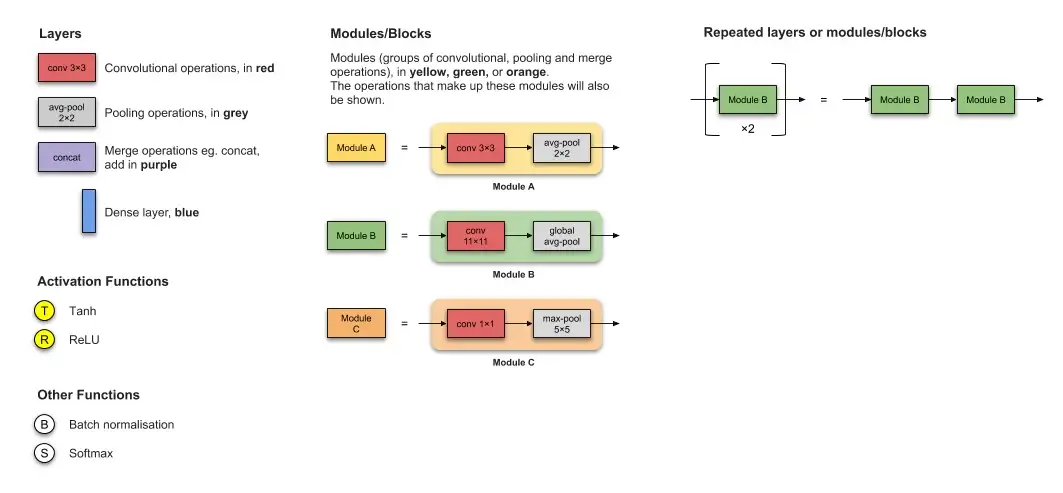

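1. Understand and work through the computation of the convolutional-layer cost shown in Figure 2 (🏐 Background knowledge -> Computing the cost of a convolutional layer contains my derivation; I suggest deriving it by hand first and then reading it).

2. Understand the parallel structure of convolutional layers and the 1x1 convolution kernel (key point).

3. Write the corresponding PyTorch code from the model architecture diagram, and use Inception v1 to recognize monkeypox.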
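As a quick companion to task 1: a k x k convolution (bias ignored) needs H_out × W_out × K × K × C_in × C_out multiplications, because each of the H_out × W_out × C_out output values is a dot product over K × K × C_in inputs. The sketch below applies this to an illustrative case, a 28×28×192 input with 32 output channels (the input of `inception3a` in the model later in this post; these numbers are an assumption, not necessarily the ones in Figure 2), comparing a direct 5×5 convolution with a 1×1 reduction to 16 channels followed by the 5×5 convolution.

```python
def conv_mults(h_out: int, w_out: int, k: int, c_in: int, c_out: int) -> int:
    """Number of multiplications in a k x k convolution (bias ignored)."""
    # Each output element needs k*k*c_in multiplications,
    # and there are h_out*w_out*c_out output elements.
    return h_out * w_out * k * k * c_in * c_out


# Illustrative (assumed) sizes: 28x28x192 input, 32 output channels,
# stride 1 with "same" padding so the output is also 28x28
direct = conv_mults(28, 28, 5, 192, 32)

# 1x1 reduction to 16 channels first, then the 5x5 convolution
reduced = conv_mults(28, 28, 1, 192, 16) + conv_mults(28, 28, 5, 16, 32)

print(f"direct 5x5: {direct:,}")   # direct 5x5: 120,422,400
print(f"1x1 + 5x5:  {reduced:,}")  # 1x1 + 5x5:  12,443,648
print(f"ratio: {direct / reduced:.1f}x")
```

The 1×1 layer costs a little extra but shrinks the input of the expensive 5×5 layer twelvefold, which cuts the total by roughly an order of magnitude in this example.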

I. Inception v1

Paper: Going deeper with convolutions.pdf. If the link does not open, search for it on 谷粉学术 and download it there.

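1. Introduction

Inception v1 is a deep convolutional neural network that achieved the best classification and detection results in the ILSVRC 2014 competition [1].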

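The network's defining feature is the Inception module, which applies several convolution kernels of different sizes in parallel to extract feature maps at different scales and then concatenates these feature maps. Features at multiple scales are therefore considered together, which improves the network's accuracy and generalization.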
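Concatenating along the channel axis only works if every branch keeps the same height and width. At stride 1, a k×k convolution with odd k preserves the spatial size when padding = (k - 1) / 2. The minimal PyTorch sketch below builds the "naive" module (no 1×1 reductions) with random weights; the input shape and channel counts are illustrative assumptions. Note how the pooling branch passes all 192 input channels through, so the concatenated output grows quickly.

```python
import torch
import torch.nn as nn

x = torch.randn(1, 192, 28, 28)  # N, C, H, W (illustrative shape)

# Parallel branches; padding = (k - 1) // 2 keeps H and W unchanged at stride 1
branches = nn.ModuleList([
    nn.Conv2d(192, 64, kernel_size=1),                 # 1x1 conv
    nn.Conv2d(192, 128, kernel_size=3, padding=1),     # 3x3 conv
    nn.Conv2d(192, 32, kernel_size=5, padding=2),      # 5x5 conv
    nn.MaxPool2d(kernel_size=3, stride=1, padding=1),  # 3x3 max pool keeps all 192 channels
])

outputs = [branch(x) for branch in branches]
for out in outputs:
    print(tuple(out.shape))  # every branch is 28x28

# Concatenate along the channel dimension: 64 + 128 + 32 + 192 = 416 channels
y = torch.cat(outputs, dim=1)
print(tuple(y.shape))  # (1, 416, 28, 28)
```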

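In Inception v1, an Inception module consists of 1x1, 3x3 and 5x5 convolutional layers plus a 3x3 max-pooling layer, all applied in parallel. Additional 1x1 convolutions are placed before the 3x3 and 5x5 convolutions (and after the max pooling) to reduce the number of channels, which keeps the parameter count and computation from growing too large [2]. Inception v1 is the foundation of the later Inception family and made an important contribution to the development of deep learning.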
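To make the parameter savings concrete, the sketch below counts the weights and biases of a direct 5×5 convolution from 192 to 32 channels and of a 1×1 reduction to 16 channels followed by the same 5×5 convolution. The channel counts are illustrative assumptions (they match the 5×5 branch of `inception3a` in the model below), and `n_params` is a hypothetical helper.

```python
import torch.nn as nn


def n_params(module: nn.Module) -> int:
    """Total number of parameters (weights + biases) in a module."""
    return sum(p.numel() for p in module.parameters())


# Direct 5x5 convolution: 192 -> 32 channels (illustrative sizes)
direct = nn.Conv2d(192, 32, kernel_size=5, padding=2)

# 1x1 "reduce" convolution to 16 channels, then the 5x5 convolution
reduced = nn.Sequential(
    nn.Conv2d(192, 16, kernel_size=1),
    nn.ReLU(inplace=True),
    nn.Conv2d(16, 32, kernel_size=5, padding=2),
)

print(n_params(direct))   # 153632 = 192*32*25 + 32
print(n_params(reduced))  # 15920  = (192*16 + 16) + (16*32*25 + 32)
```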

2. Architecture

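Note: Two auxiliary branches are also added, for two reasons. First, they counter vanishing gradients by injecting gradient directly into the middle of the network: during backpropagation, if the derivative at any one layer is 0, the chain-rule product becomes 0. Second, they use the output of an intermediate layer for classification, which acts as a form of model ensembling. At test time these two auxiliary softmax branches are removed. Later models rarely used this technique, so you can skip it and focus on the parallel convolutional structure and the 1x1 convolution kernels.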
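For readers who want to see one anyway, here is a sketch of an auxiliary head following the description in the original paper: 5×5 average pooling with stride 3, a 1×1 convolution with 128 filters, a 1024-unit fully connected layer, 70% dropout, and a linear classifier, with the auxiliary loss added to the main loss at weight 0.3 during training. The class name `AuxClassifier`, the 2-class setting and the 14×14×512 input (the `inception4a` output for a 224×224 image) are illustrative assumptions; the model in this post does not use auxiliary heads.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class AuxClassifier(nn.Module):
    """Hypothetical auxiliary head attached to an intermediate 14x14 feature map."""

    def __init__(self, in_channels: int, num_classes: int):
        super().__init__()
        self.pool = nn.AvgPool2d(kernel_size=5, stride=3)       # 14x14 -> 4x4
        self.conv = nn.Conv2d(in_channels, 128, kernel_size=1)  # channel reduction
        self.fc1 = nn.Linear(128 * 4 * 4, 1024)
        self.dropout = nn.Dropout(0.7)
        self.fc2 = nn.Linear(1024, num_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        x = F.relu(self.conv(self.pool(x)))
        x = torch.flatten(x, 1)
        x = self.dropout(F.relu(self.fc1(x)))
        return self.fc2(x)  # raw logits


aux = AuxClassifier(in_channels=512, num_classes=2)
feat = torch.randn(8, 512, 14, 14)  # stand-in for an inception4a output
aux_logits = aux(feat)
print(aux_logits.shape)             # torch.Size([8, 2])

# Training-time loss: main loss + 0.3 * auxiliary loss (main logits faked here)
main_logits = torch.randn(8, 2)
target = torch.randint(0, 2, (8,))
loss = F.cross_entropy(main_logits, target) + 0.3 * F.cross_entropy(aux_logits, target)
```

At inference time only the main classifier's output would be used, matching the note that the auxiliary branches are removed for testing.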

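II. Reproducing Inception v1 in PyTorch

1. Preparation

The general template is the same as before, so I will not list it in detail again. For an example, see: Deep Learning Week J4: Exploring a Combination of ResNet and DenseNet (牛大了2023's CSDN blog).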

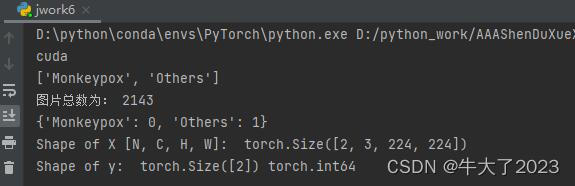

Configure the GPU and load the dataset

```python
import os, PIL, random, pathlib

import torch
import torch.nn as nn
import torchvision
from torchvision import transforms, datasets

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(device)

data_dir = pathlib.Path('./data/')

# Each subfolder of ./data/ is one class
data_paths = list(data_dir.glob('*'))
classeNames = [path.name for path in data_paths]  # path.name works on both Windows and Linux
print(classeNames)

image_count = len(list(data_dir.glob('*/*')))
print("Total number of images:", image_count)
```

Data preprocessing and dataset split

```python
train_transforms = transforms.Compose([
    transforms.Resize([224, 224]),  # resize all images to the same size
    # transforms.RandomHorizontalFlip(),  # random horizontal flip (augmentation)
    transforms.ToTensor(),          # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    transforms.Normalize(           # standardize each channel as (x - mean) / std, which helps convergence
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])  # the widely used ImageNet channel statistics
])

test_transform = transforms.Compose([
    transforms.Resize([224, 224]),  # resize all images to the same size
    transforms.ToTensor(),          # convert to a tensor scaled to [0, 1]
    transforms.Normalize(           # same standardization as for training
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])
])

# Note: both splits below come from one ImageFolder, so both use train_transforms
total_data = datasets.ImageFolder("./data/", transform=train_transforms)
print(total_data.class_to_idx)

# Random 80/20 split into training and test sets
train_size = int(0.8 * len(total_data))
test_size = len(total_data) - train_size
train_dataset, test_dataset = torch.utils.data.random_split(total_data, [train_size, test_size])

batch_size = 32

train_dl = torch.utils.data.DataLoader(train_dataset,
                                       batch_size=batch_size,
                                       shuffle=True,
                                       num_workers=0)

test_dl = torch.utils.data.DataLoader(test_dataset,
                                      batch_size=batch_size,
                                      shuffle=False,  # no need to shuffle for evaluation
                                      num_workers=0)

for X, y in test_dl:
    print("Shape of X [N, C, H, W]: ", X.shape)
    print("Shape of y: ", y.shape, y.dtype)
    break
```
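The mean/std values used above are the standard ImageNet statistics. If you would rather normalize with statistics of your own images, the per-channel mean and standard deviation can be estimated as in the sketch below. `channel_mean_std` is a hypothetical helper; it expects a dataset that yields `[0, 1]` tensors (only `Resize` and `ToTensor` applied, no `Normalize`), and a random `TensorDataset` stands in for the real images here.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset


def channel_mean_std(dataset, batch_size: int = 32):
    """Per-channel mean and std over all pixels of a dataset of (C, H, W) tensors."""
    loader = DataLoader(dataset, batch_size=batch_size, shuffle=False)
    total, sum_, sum_sq = 0, None, None
    for x, _ in loader:
        x = x.double()                              # accumulate in float64 for accuracy
        s = x.sum(dim=(0, 2, 3))                    # per-channel sum
        sq = (x ** 2).sum(dim=(0, 2, 3))            # per-channel sum of squares
        sum_ = s if sum_ is None else sum_ + s
        sum_sq = sq if sum_sq is None else sum_sq + sq
        total += x.shape[0] * x.shape[2] * x.shape[3]  # pixels per channel
    mean = sum_ / total
    std = (sum_sq / total - mean ** 2).sqrt()       # population std: sqrt(E[x^2] - E[x]^2)
    return mean.tolist(), std.tolist()


# Stand-in data: 100 random "images" in [0, 1] with dummy labels
fake = TensorDataset(torch.rand(100, 3, 32, 32), torch.zeros(100, dtype=torch.long))
mean, std = channel_mean_std(fake)
print(mean, std)  # roughly 0.5 and 0.289 for uniform noise
```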

2. Model Implementation

```python
import torch
import torch.nn as nn


class inception_block(nn.Module):
    # BatchNorm was not part of the original Inception v1; it is kept here as in this implementation
    def __init__(self, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):
        super().__init__()

        # Branch 1: 1x1 conv
        self.branch1 = nn.Sequential(
            nn.Conv2d(in_channels, ch1x1, kernel_size=1),
            nn.BatchNorm2d(ch1x1),
            nn.ReLU(inplace=True)
        )

        # Branch 2: 1x1 conv (channel reduction) -> 3x3 conv
        self.branch2 = nn.Sequential(
            nn.Conv2d(in_channels, ch3x3red, kernel_size=1),
            nn.BatchNorm2d(ch3x3red),
            nn.ReLU(inplace=True),
            nn.Conv2d(ch3x3red, ch3x3, kernel_size=3, padding=1),
            nn.BatchNorm2d(ch3x3),
            nn.ReLU(inplace=True)
        )

        # Branch 3: 1x1 conv (channel reduction) -> 5x5 conv; padding=2 keeps H and W unchanged
        self.branch3 = nn.Sequential(
            nn.Conv2d(in_channels, ch5x5red, kernel_size=1),
            nn.BatchNorm2d(ch5x5red),
            nn.ReLU(inplace=True),
            nn.Conv2d(ch5x5red, ch5x5, kernel_size=5, padding=2),
            nn.BatchNorm2d(ch5x5),
            nn.ReLU(inplace=True)
        )

        # Branch 4: 3x3 max pool -> 1x1 conv (pool projection)
        self.branch4 = nn.Sequential(
            nn.MaxPool2d(kernel_size=3, stride=1, padding=1),
            nn.Conv2d(in_channels, pool_proj, kernel_size=1),
            nn.BatchNorm2d(pool_proj),
            nn.ReLU(inplace=True)
        )

    def forward(self, x):
        branch1_output = self.branch1(x)
        branch2_output = self.branch2(x)
        branch3_output = self.branch3(x)
        branch4_output = self.branch4(x)

        # Concatenate the four branches along the channel dimension
        outputs = [branch1_output, branch2_output, branch3_output, branch4_output]
        return torch.cat(outputs, 1)
```

```python
import torch.nn.functional as F


class InceptionV1(nn.Module):
    def __init__(self, num_classes=1000):
        super(InceptionV1, self).__init__()

        # Stem: 7x7/2 conv -> 3x3/2 max pool -> 1x1 conv -> 3x3 conv -> 3x3/2 max pool
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3)
        self.maxpool1 = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.conv2 = nn.Conv2d(64, 64, kernel_size=1, stride=1, padding=0)  # a 1x1 conv needs no padding
        self.conv3 = nn.Conv2d(64, 192, kernel_size=3, stride=1, padding=1)
        self.maxpool2 = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

        # Stage 3 (28x28 for a 224x224 input)
        self.inception3a = inception_block(192, 64, 96, 128, 16, 32, 32)
        self.inception3b = inception_block(256, 128, 128, 192, 32, 96, 64)
        self.maxpool3 = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

        # Stage 4 (14x14)
        self.inception4a = inception_block(480, 192, 96, 208, 16, 48, 64)
        self.inception4b = inception_block(512, 160, 112, 224, 24, 64, 64)
        self.inception4c = inception_block(512, 128, 128, 256, 24, 64, 64)
        self.inception4d = inception_block(512, 112, 144, 288, 32, 64, 64)
        self.inception4e = inception_block(528, 256, 160, 320, 32, 128, 128)
        self.maxpool4 = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

        # Stage 5 (7x7), then 7x7 average pooling down to 1x1x1024
        self.inception5a = inception_block(832, 256, 160, 320, 32, 128, 128)
        self.inception5b = nn.Sequential(
            inception_block(832, 384, 192, 384, 48, 128, 128),
            nn.AvgPool2d(kernel_size=7, stride=1, padding=0),
            nn.Dropout(0.4)
        )

        # Fully connected classifier. It outputs raw logits, because
        # nn.CrossEntropyLoss already applies log-softmax internally.
        self.classifier = nn.Sequential(
            nn.Linear(in_features=1024, out_features=1024),
            nn.ReLU(),
            nn.Linear(in_features=1024, out_features=num_classes)
        )

    def forward(self, x):
        x = self.conv1(x)
        x = F.relu(x)
        x = self.maxpool1(x)
        x = self.conv2(x)
        x = F.relu(x)
        x = self.conv3(x)
        x = F.relu(x)
        x = self.maxpool2(x)

        x = self.inception3a(x)
        x = self.inception3b(x)
        x = self.maxpool3(x)

        x = self.inception4a(x)
        x = self.inception4b(x)
        x = self.inception4c(x)
        x = self.inception4d(x)
        x = self.inception4e(x)
        x = self.maxpool4(x)

        x = self.inception5a(x)
        x = self.inception5b(x)             # -> (N, 1024, 1, 1)

        x = torch.flatten(x, start_dim=1)   # -> (N, 1024)
        x = self.classifier(x)
        return x
```

The definition is complete; let's test it:

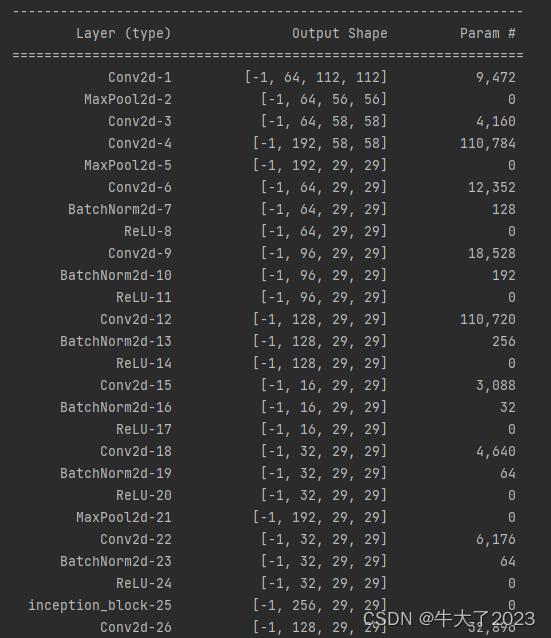

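```python
# One output per class folder under ./data/
model = InceptionV1(num_classes=len(total_data.classes))
model.to(device)

# Print the parameter count and other per-layer statistics
import torchsummary as summary
summary.summary(model, (3, 224, 224), device=device.type)  # pass the device so this also runs on CPU
```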
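To see why the final `AvgPool2d(kernel_size=7)` lines up with a 224×224 input, note that every conv or pooling layer produces out = floor((n + 2p - k) / s) + 1, while the inception blocks keep H and W unchanged. The sketch below traces the spatial size through the stem and each downsampling step, assuming the original paper's settings (3×3 max pools with stride 2 and padding 1); `out_size` is a hypothetical helper. The final 1×1×1024 map is what gets flattened into the 1024 input features of the first `Linear` layer.

```python
def out_size(n: int, k: int, s: int = 1, p: int = 0) -> int:
    """Output height/width of a conv or pooling layer (PyTorch default floor mode)."""
    return (n + 2 * p - k) // s + 1


# (name, kernel, stride, padding) for the stem and each downsampling step
layers = [
    ("conv1 7x7/2", 7, 2, 3),
    ("maxpool1 3x3/2", 3, 2, 1),
    ("conv2 1x1/1", 1, 1, 0),
    ("conv3 3x3/1", 3, 1, 1),
    ("maxpool2 3x3/2", 3, 2, 1),
    ("maxpool3 3x3/2", 3, 2, 1),  # after inception3a/3b
    ("maxpool4 3x3/2", 3, 2, 1),  # after inception4a-4e
    ("avgpool 7x7/1", 7, 1, 0),   # after inception5a/5b
]

n = 224
sizes = []
for name, k, s, p in layers:
    n = out_size(n, k, s, p)
    sizes.append(n)
    print(f"{name:>15}: {n}x{n}")

print(sizes)  # [112, 56, 56, 56, 28, 14, 7, 1]
```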

3. Training

The code is much the same as before, so I will not go into detail.

```python
# Training loop
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # size of the training set
    num_batches = len(dataloader)   # number of batches (size / batch_size, rounded up)

    train_loss, train_acc = 0, 0    # running training loss and number of correct predictions

    for X, y in dataloader:         # images and their labels
        X, y = X.to(device), y.to(device)

        # Compute the prediction error
        pred = model(X)             # network output
        loss = loss_fn(pred, y)     # loss between the network output and the ground-truth labels

        # Backpropagation
        optimizer.zero_grad()       # reset accumulated gradients
        loss.backward()             # compute gradients
        optimizer.step()            # update the parameters

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss


def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # size of the test set
    num_batches = len(dataloader)   # number of batches
    test_loss, test_acc = 0, 0

    # No gradients are needed for evaluation, which saves memory and computation
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss
```

Train for ten epochs and save the best model

```python
import copy

optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)
loss_fn = nn.CrossEntropyLoss()  # loss function

epochs = 10

train_loss = []
train_acc = []
test_loss = []
test_acc = []

best_acc = 0  # best test accuracy so far, used to select the best model

for epoch in range(epochs):
    # Update the learning rate (when using a custom schedule)
    # adjust_learning_rate(optimizer, epoch, learn_rate)

    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, optimizer)
    # scheduler.step()  # update the learning rate (when using a built-in scheduler)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    # Keep a copy of the best model so far
    if epoch_test_acc > best_acc:
        best_acc = epoch_test_acc
        best_model = copy.deepcopy(model)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Current learning rate
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}, Lr:{:.2E}')
    print(template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss,
                          epoch_test_acc * 100, epoch_test_loss, lr))

# Save the best model's weights (not the last epoch's) to a file
PATH = './best_model.pth'  # file name for the saved parameters
torch.save(best_model.state_dict(), PATH)

print('Done')
```
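The loop above leaves a commented-out call to `adjust_learning_rate(optimizer, epoch, learn_rate)`, which is not defined in this post. One possible version is sketched below; the step-decay rule (multiply by 0.92 every 2 epochs) is an assumption chosen for illustration, not necessarily the author's schedule. PyTorch's built-in `torch.optim.lr_scheduler.StepLR(optimizer, step_size=2, gamma=0.92)` gives the same schedule when `scheduler.step()` is called once per epoch.

```python
import torch
import torch.nn as nn


def adjust_learning_rate(optimizer, epoch, start_lr):
    """Hypothetical step decay: multiply the learning rate by 0.92 every 2 epochs."""
    lr = start_lr * (0.92 ** (epoch // 2))
    for param_group in optimizer.param_groups:
        param_group["lr"] = lr


# Usage with a stand-in model; call once at the start of each epoch
model = nn.Linear(4, 2)
learn_rate = 1e-4
optimizer = torch.optim.Adam(model.parameters(), lr=learn_rate)
for epoch in range(5):
    adjust_learning_rate(optimizer, epoch, learn_rate)
    print(epoch, optimizer.param_groups[0]["lr"])
```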

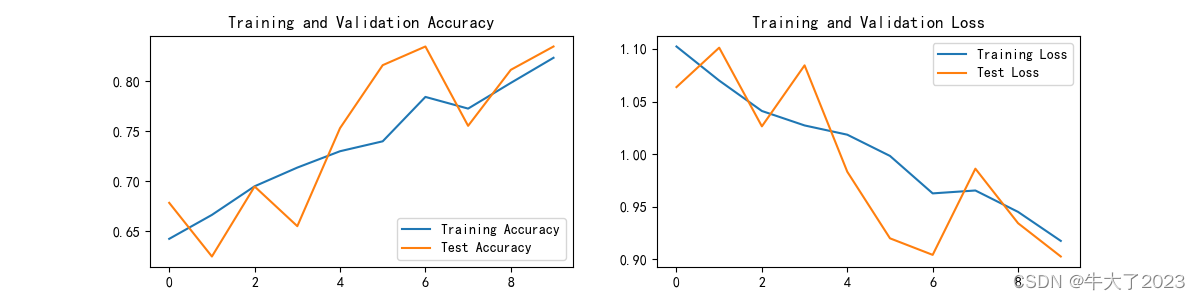

Plot the training history

```python
import matplotlib.pyplot as plt
# Hide warnings
import warnings
warnings.filterwarnings("ignore")             # ignore warning messages

plt.rcParams['font.sans-serif'] = ['SimHei']  # font that can render Chinese labels
plt.rcParams['axes.unicode_minus'] = False    # render minus signs correctly with this font
plt.rcParams['figure.dpi'] = 100              # resolution

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))

# Left: accuracy curves
plt.subplot(1, 2, 1)
plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Test Accuracy')

# Right: loss curves
plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Test Loss')

plt.show()
```

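3.2 Predicting a Specific Image

Comment out the training section first, then run the following:

```python
from PIL import Image
import matplotlib.pyplot as plt

classes = list(total_data.class_to_idx)

# Load the saved best weights (needed because the training code above is commented out)
model.load_state_dict(torch.load('./best_model.pth', map_location=device))

def predict_one_image(image_path, model, transform, classes):
    test_img = Image.open(image_path).convert('RGB')
    plt.imshow(test_img)  # show the image being predicted

    test_img = transform(test_img)
    img = test_img.to(device).unsqueeze(0)  # add a batch dimension

    model.eval()
    with torch.no_grad():
        output = model(img)

    _, pred = torch.max(output, 1)
    pred_class = classes[pred.item()]
    print(f'Prediction: {pred_class}')


# Predict one photo from the training set
predict_one_image(image_path='./data/Others/NM01_01_01.jpg',
                  model=model,
                  transform=test_transform,
                  classes=classes)
```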
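If the network's last layer outputs raw logits (the usual pairing with `nn.CrossEntropyLoss`, which applies log-softmax internally), class probabilities can be recovered at prediction time with `torch.softmax`. A minimal sketch: `predict_proba` is a hypothetical helper, and the tiny linear model, random input and the class names `"Monkeypox"`/`"Others"` are placeholders for the trained `InceptionV1`, a transformed image and `classes`.

```python
import torch
import torch.nn as nn


@torch.no_grad()
def predict_proba(model: nn.Module, img: torch.Tensor, classes):
    """Return (predicted class, {class: probability}) for one (C, H, W) image tensor."""
    model.eval()
    logits = model(img.unsqueeze(0))         # add a batch dimension
    probs = torch.softmax(logits, dim=1)[0]  # logits -> probabilities that sum to 1
    idx = int(probs.argmax())
    return classes[idx], {c: float(p) for c, p in zip(classes, probs)}


# Stand-in model and input, only to show the call pattern
toy = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 2))
label, probs = predict_proba(toy, torch.rand(3, 8, 8), ["Monkeypox", "Others"])
print(label, probs)
```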

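III. Summary

Inception v1 is a deep convolutional neural network whose key structural feature is that it convolves the input feature map with several kernels of different sizes in parallel and concatenates the outputs of these branches along the depth (channel) dimension to form the final output. The aim is to extract features at multiple scales and combine them, improving classification and detection accuracy while 1x1 channel reductions keep the computational cost low.

To reproduce Inception v1 in PyTorch, first define the Inception module as an nn.Module with four branches (1x1, 3x3, 5x5 convolutions and a max-pooling branch), then concatenate the four branch outputs along the channel dimension. Next, stack several Inception modules in order, interleaved with max-pooling layers, to form the complete Inception v1 network; nn.Sequential or nn.ModuleList can be used to compose them. Finally, train the network by optimizing its parameters with backpropagation.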
