
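Developing a VS Code Extension for Audio Recording and Real-Time Speech Transcription That Saves MP3 Files to the Workspace: A Pitfall Diary!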

Preface

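Recently I was asked to implement audio recording that saves an MP3 file to the local workspace. My first idea was to build it into the VS Code core source, but that is no small amount of work, and the schedule was tight. There are already plenty of recording examples out there, and some of that code can be reused, so I decided to implement the feature as an extension. The catch is that an extension's web page runs in a browser environment, where writing an MP3 file to disk is impossible, so the audio data has to be handed over to the Node environment.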

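As of the current VS Code release, the maintainers do not open up access to local media devices. That is why none of the voice-related extensions in the marketplace get media permissions from VS Code directly. VS Code is open source and has a huge extension marketplace. If every permission were granted, someone with bad intentions could easily use an extension to collect your personal information without you noticing, for example by turning on your microphone or camera or reading your geolocation. So the maintainers restrict these permissions. If you were hoping to use local media devices purely from an extension, you can drop that idea.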

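If you are doing secondary development on your own VS Code fork, it is easy: just enable the corresponding permissions in the core source.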

Here we mainly cover the extension implementation:

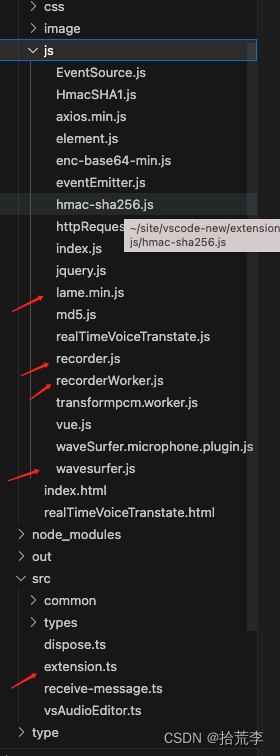

The directory structure and main files

Local recording and MP3 generation

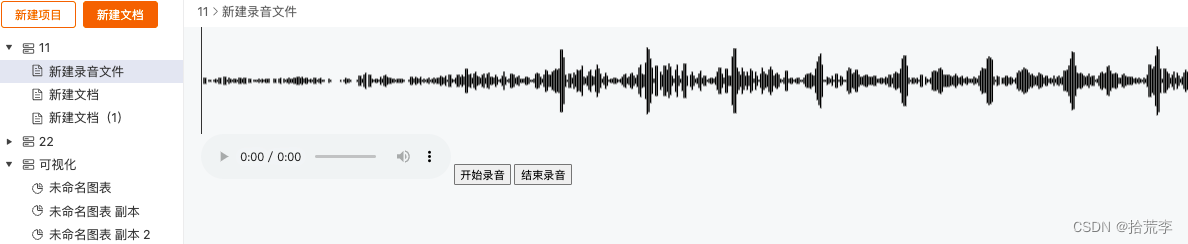

The corresponding demo page

wavesurfer.js -> draws the waveform and spectrogram and plays back the recording

lame.min.js -> MP3 encoder

recorder.js -> implements recording

GitHub has many examples of these files. They differ only in details, and the core APIs are the same.

Initialization

我们再了解几个js关于语音的api

navigator.getUserMedia: 该对象可提供对相机和麦克风等媒体输入设备的连接访问,也包括屏幕共享。

AudioContext: 接口表示由链接在一起的音频模块构建的音频处理图,每个模块由一个AudioNode表示。音频上下文控制它包含的节点的创建和音频处理或解码的执行。在做任何其他操作之前,您需要创建一个AudioContext对象,因为所有事情都是在上下文中发生的。建议创建一个AudioContext对象并复用它,而不是每次初始化一个新的AudioContext对象,并且可以对多个不同的音频源和管道同时使用一个AudioContext对象。

createMediaStreamSource: 方法用于创建一个新的 MediaStreamAudioSourceNode 对象,需要传入一个媒体流对象 (MediaStream 对象)(可以从 navigator.getUserMedia 获得 MediaStream 对象实例), 然后来自 MediaStream 的音频就可以被播放和操作。

createScriptProcessor: 处理音频。

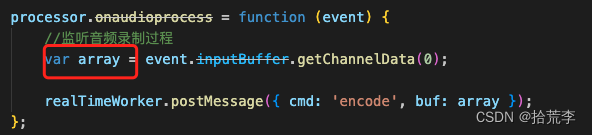

onaudioprocess: 监听音频录制过程,实时获取语音流
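What `onaudioprocess` delivers, via `event.inputBuffer.getChannelData(0)`, is a `Float32Array` of samples nominally in the range [-1, 1]. Both the MP3 encoder and the iFlytek API used later in this post expect 16-bit integer PCM instead, so the samples have to be converted. The sketch below shows that conversion, using the same clamping and scaling as the `floatTo16BitPCM` function in the worker code below. `pcm16ToBytesLE` is a hypothetical extra helper that lays the samples out as little-endian bytes, which is the usual byte order for raw PCM.

```javascript
// Convert Float32 samples (nominally in [-1, 1]) to signed 16-bit PCM.
// Negative values scale by 0x8000 and positive ones by 0x7FFF, so -1 maps
// to -32768 and +1 maps to 32767 without overflowing.
function floatTo16BitPCM(input) {
  const output = new Int16Array(input.length);
  for (let i = 0; i < input.length; i++) {
    const s = Math.max(-1, Math.min(1, input[i])); // clamp out-of-range samples
    output[i] = s < 0 ? s * 0x8000 : s * 0x7fff;   // Int16Array truncates the fraction
  }
  return output;
}

// Hypothetical helper: serialize 16-bit samples as little-endian bytes.
function pcm16ToBytesLE(samples) {
  const bytes = new Uint8Array(samples.length * 2);
  const view = new DataView(bytes.buffer);
  samples.forEach((v, i) => view.setInt16(i * 2, v, true)); // true = little-endian
  return bytes;
}

const pcm = floatTo16BitPCM(new Float32Array([-1, -0.5, 0, 0.5, 1, 2]));
console.log(Array.from(pcm)); // [ -32768, -16384, 0, 16383, 32767, 32767 ]
```

The last input (2) shows why clamping matters. Without it, 2 * 0x7FFF would wrap around when stored in an `Int16Array`.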

The page

```javascript
mounted() {
  // Live waveform display fed by the wavesurfer microphone plugin
  this.wavesurfer = WaveSurfer.create({
    container: '#waveform',
    waveColor: 'black',
    interact: false,
    cursorWidth: 1,
    barWidth: 1,
    plugins: [
      WaveSurfer.microphone.create()
    ]
  });

  this.wavesurfer.microphone.on('deviceReady', function (stream) {
    console.log('Device ready!', stream);
  });

  this.wavesurfer.microphone.on('deviceError', function (code) {
    console.warn('Device error: ' + code);
  });

  this.recorder = new Recorder({
    sampleRate: 44100, // sample rate, default 44100 Hz (standard MP3); recorder.js replaces it with the AudioContext's actual rate
    bitRate: 128,      // bit rate, default 128 kbps (standard MP3 quality)
    success: function () { // success callback
      console.log('success-->');
      // start.disabled = false;
    },
    error: function (msg) { // failure callback
      alert('msg-->' + msg); // alert() takes a single argument, so concatenate
    },
    fix: function (msg) { // called when HTML5 recording is not supported
      alert('msg--->' + msg);
    }
  });
}
```

Starting and stopping recording on click

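```javascript
start() {
  // start the microphone
  this.wavesurfer.microphone.start();
  // start recording
  this.recorder.start();
},

end() {
  // same as stopDevice() but also clears the wavesurfer canvas
  this.wavesurfer.microphone.stop();
  // stop recording
  this.recorder.stop();
  // getBlob() asks the worker to finish encoding and hands back the MP3 Blob
  this.recorder.getBlob((blob) => {
    this.audioPath = URL.createObjectURL(blob);
    // Assuming <audio ref="myAudio"> binds its src to audioPath:
    // wait for Vue to update the DOM before reloading the element
    this.$nextTick(() => this.$refs.myAudio.load());
  });
}
```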

recorder.js

```javascript
// Initialization: fall back to vendor-prefixed APIs (a method of the Util object)
init: function () {
  navigator.getUserMedia = navigator.getUserMedia ||
    navigator.webkitGetUserMedia ||
    navigator.mozGetUserMedia ||
    navigator.msGetUserMedia;
  window.AudioContext = window.AudioContext ||
    window.webkitAudioContext;
},

// Request access to the microphone
navigator.getUserMedia({
  audio: true // constraints
},
function (stream) { // success callback
  var context = new AudioContext(),
    microphone = context.createMediaStreamSource(stream), // media-stream audio source
    processor = context.createScriptProcessor(0, 1, 1);   // JS audio processor: browser-chosen buffer size, 1 input and 1 output channel
  // ...
});

// Start recording: microphone -> processor -> destination
_this.start = function () {
  if (processor && microphone) {
    microphone.connect(processor);
    processor.connect(context.destination);
    Util.log('Recording started');
  }
};

// Stop recording
_this.stop = function () {
  if (processor && microphone) {
    microphone.disconnect();
    processor.disconnect();
    Util.log('Recording stopped');
  }
};

// Start a background worker that encodes the data. I host the worker script online
// and load it through fetch + a Blob URL to get around access restrictions.
fetch(
  "https://lawdawn-download.oss-cn-beijing.aliyuncs.com/js/recorderWorker.js"
)
  .then((response) => response.blob())
  .then((blob) => {
    const url = URL.createObjectURL(blob);
    realTimeWorker = new Worker(url);
    realTimeWorker.onmessage = async function (e) {
      // ...
    };
  });
```

recorderWorker.js

```javascript
// The worker receives the audio samples and encodes them into MP3
(function () {
  'use strict';

  importScripts('https://lawdawn-download.oss-cn-beijing.aliyuncs.com/js/lame.min.js');

  var mp3Encoder, maxSamples = 1152, samplesMono, lame, config, dataBuffer;

  var clearBuffer = function () {
    dataBuffer = [];
  };

  var appendToBuffer = function (mp3Buf) {
    dataBuffer.push(new Int8Array(mp3Buf));
  };

  var init = function (prefConfig) {
    config = prefConfig || {};
    lame = new lamejs();
    // 1 channel (mono); sample rate and bit rate are passed in by recorder.js
    mp3Encoder = new lame.Mp3Encoder(1, config.sampleRate || 44100, config.bitRate || 128);
    clearBuffer();
    self.postMessage({
      cmd: 'init'
    });
  };

  // Float samples in [-1, 1] -> signed 16-bit integers
  var floatTo16BitPCM = function (input, output) {
    for (var i = 0; i < input.length; i++) {
      var s = Math.max(-1, Math.min(1, input[i]));
      output[i] = (s < 0 ? s * 0x8000 : s * 0x7FFF);
    }
  };

  // samples is the Float32Array from getChannelData(0)
  var convertBuffer = function (samples) {
    var data = new Float32Array(samples);
    var out = new Int16Array(samples.length);
    floatTo16BitPCM(data, out);
    return out;
  };

  var encode = function (samples) {
    samplesMono = convertBuffer(samples);
    // Feed the encoder at most one MP3 frame (1152 samples) at a time.
    // Looping on i < length avoids an extra empty slice when the length is a multiple of 1152.
    for (var i = 0; i < samplesMono.length; i += maxSamples) {
      var left = samplesMono.subarray(i, i + maxSamples);
      var mp3buf = mp3Encoder.encodeBuffer(left);
      appendToBuffer(mp3buf);
    }
  };

  var finish = function () {
    appendToBuffer(mp3Encoder.flush()); // flush whatever the encoder still holds
    self.postMessage({
      cmd: 'end',
      buf: dataBuffer
    });
    clearBuffer();
  };

  self.onmessage = function (e) {
    switch (e.data.cmd) {
      case 'init':
        init(e.data.config);
        break;
      case 'encode':
        encode(e.data.buf);
        break;
      case 'finish':
        finish();
        break;
    }
  };
})();
```
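The worker hands lamejs's `encodeBuffer` blocks of at most 1152 samples because one MPEG-1 Layer III frame holds 1152 samples per channel. A slicing loop should stop as soon as the index reaches the end of the buffer. A condition such as `remaining >= 0` would yield one extra, empty slice whenever the length is an exact multiple of 1152. The sketch below isolates the slicing from lamejs. `frameSlices` is a hypothetical name, and in the worker each slice would go to `mp3Encoder.encodeBuffer(slice)`.

```javascript
// One MPEG-1 Layer III frame contains 1152 samples per channel.
const MAX_SAMPLES = 1152;

// Yield consecutive views of at most `size` samples from a mono Int16Array.
// subarray() creates views over the same memory, so no samples are copied.
function* frameSlices(samples, size = MAX_SAMPLES) {
  for (let i = 0; i < samples.length; i += size) {
    yield samples.subarray(i, i + size);
  }
}

const lengths = [...frameSlices(new Int16Array(2304 + 100))].map((s) => s.length);
console.log(lengths); // [ 1152, 1152, 100 ]
```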

The complete recorder.js

(function (exports) {

//公共方法

var Util = {

//初始化

init: function () {

navigator.getUserMedia = navigator.getUserMedia ||

navigator.webkitGetUserMedia ||

navigator.mozGetUserMedia ||

navigator.msGetUserMedia;

window.AudioContext = window.AudioContext ||

window.webkitAudioContext;

},

//日志

log: function () {

console.log.apply(console, arguments);

}

};

let realTimeWorker;

var Recorder = function (config) {

var _this = this;

config = config || {}; //初始化配置对象

config.sampleRate = config.sampleRate || 44100; //采样频率,默认为44100Hz(标准MP3采样率)

config.bitRate = config.bitRate || 128; //比特率,默认为128kbps(标准MP3质量)

Util.init();

if (navigator.getUserMedia) {

navigator.getUserMedia({

audio: true //配置对象

},

function (stream) { //成功回调

var context = new AudioContext(),

microphone = context.createMediaStreamSource(stream), //媒体流音频源

processor = context.createScriptProcessor(0, 1, 1), //js音频处理器

successCallback, errorCallback;

config.sampleRate = context.sampleRate;

processor.onaudioprocess = function (event) {

//监听音频录制过程

var array = event.inputBuffer.getChannelData(0);

realTimeWorker.postMessage({ cmd: 'encode', buf: array });

};

fetch(

"https://lawdawn-download.oss-cn-beijing.aliyuncs.com/js/recorderWorker.js"

)

.then((response) => response.blob())

.then((blob) => {

const url = URL.createObjectURL(blob);

realTimeWorker = new Worker(url);

realTimeWorker.onmessage = async function (e) { //主线程监听后台线程,实时通信

switch (e.data.cmd) {

case 'init':

Util.log('初始化成功');

if (config.success) {

config.success();

}

break;

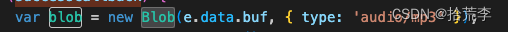

case 'end':

if (successCallback) {

var blob = new Blob(e.data.buf, { type: 'audio/mp3' });

let formData = new FormData();

formData.append('file', blob, 'main.mp3');

fetch("http://127.0.0.1:8840/microm", {

method: 'POST',

body: formData

})

successCallback(blob);

Util.log('MP3大小:' + blob.size + '%cB', 'color:#0000EE');

}

break;

case 'error':

Util.log('错误信息:' + e.data.error);

if (errorCallback) {

errorCallback(e.data.error);

}

break;

default:

Util.log('未知信息:' + e.data);

}

};

_this.start = function () {

if (processor && microphone) {

microphone.connect(processor);

processor.connect(context.destination);

Util.log('开始录音');

}

};

//结束录音

_this.stop = function () {

if (processor && microphone) {

microphone.disconnect();

processor.disconnect();

Util.log('录音结束');

}

};

//获取blob格式录音文件

_this.getBlob = function (onSuccess, onError) {

successCallback = onSuccess;

errorCallback = onError;

realTimeWorker.postMessage({ cmd: 'finish' });

};

realTimeWorker.postMessage({

cmd: 'init',

config: {

sampleRate: config.sampleRate,

bitRate: config.bitRate

}

});

});

// var realTimeWorker = new Worker('js/recorderWorker.js'); //开启后台线程

//接口列表

//开始录音

},

function (error) { //失败回调

var msg;

switch (error.code || error.name) {

case 'PermissionDeniedError':

case 'PERMISSION_DENIED':

case 'NotAllowedError':

msg = '用户拒绝访问麦克风';

break;

case 'NOT_SUPPORTED_ERROR':

case 'NotSupportedError':

msg = '浏览器不支持麦克风';

break;

case 'MANDATORY_UNSATISFIED_ERROR':

case 'MandatoryUnsatisfiedError':

msg = '找不到麦克风设备';

break;

default:

msg = '无法打开麦克风,异常信息:' + (error.code || error.name);

break;

}

Util.log(msg);

if (config.error) {

config.error(msg);

}

});

} else {

Util.log('当前浏览器不支持录音功能');

if (config.fix) {

config.fix('当前浏览器不支持录音功能');

}

}

};

//模块接口

exports.Recorder = Recorder;

})(window);

踩坑!

前面录音都进行的很顺利,录音在渲染进程里都可以正常播放,接下来就是生成本地mp3文件的处理了。

第一次尝试:浏览器端获取的Blob数据,通过vscode.postMessage传送过去之后获取的竟是{}空对象。

肯能原因 数据传输过程做了序列化,导致丢失,或者Blob只是浏览器API,node无法获取

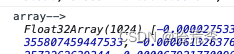

第二次尝试:将实时获取的语音数据流传递过去

数据 是Float32Array格式数据,但是通过序列化传递过去之后,发现数据无法恢复原来的样子,还是失败!
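Both failures are consistent with the message being JSON-serialized between the webview and the extension host, though the exact transport may differ between VS Code versions. A `Blob` has no own enumerable properties, so JSON turns it into `{}`. A typed array becomes a plain object keyed by index rather than an array. One common workaround is to ship the bytes as a base64 string and decode them with `Buffer` in the extension; note that this is not the approach this post ends up using. The helper names `bytesToBase64` and `base64ToBuffer` are hypothetical.

```javascript
// 1) What JSON serialization does to a typed array: an index-keyed object,
//    so the receiver does not get a Float32Array back.
console.log(JSON.stringify(new Float32Array([0.5, -0.25]))); // {"0":0.5,"1":-0.25}

// 2) Workaround sketch: send bytes as base64 text.
// Webview side: bytes -> base64 (the iFlytek demo's ArrayBufferToBase64 does the same).
function bytesToBase64(bytes) {
  let binary = '';
  for (let i = 0; i < bytes.length; i++) {
    binary += String.fromCharCode(bytes[i]);
  }
  return btoa(binary); // btoa is global in browsers and in Node 16+
}

// Extension side (Node): base64 -> Buffer, ready to hand to fs.writeFile.
function base64ToBuffer(b64) {
  return Buffer.from(b64, 'base64');
}

const mp3Bytes = new Uint8Array([0xff, 0xfb, 0x90, 0x00, 0x7f]); // arbitrary sample bytes
const restored = base64ToBuffer(bytesToBase64(mp3Bytes));
console.log(restored.equals(Buffer.from(mp3Bytes))); // true
```

In the webview, the message would be sent with something like `acquireVsCodeApi().postMessage({ type: 'mp3', data: bytesToBase64(bytes) })` and received in the extension through `webview.onDidReceiveMessage`. The `type` field is illustrative. Base64 adds roughly a third to the payload size.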

经过苦想后接下来,用了一个方法

第三次尝试:

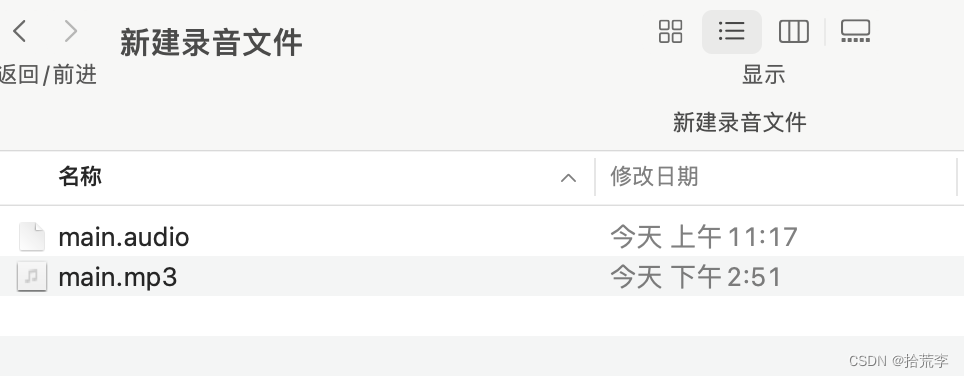

在extension.ts里面开启了一个本地服务

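```typescript
import * as vscode from 'vscode';
import * as http from 'http';
import * as fs from 'fs';
import * as path from 'path';
// VsAudioEditorProvider is the extension's custom editor (source not shown); (global as any).documentUri is presumably set there

export async function activate(context: vscode.ExtensionContext) {
  (global as any).audioWebview = null;
  context.subscriptions.push(VsAudioEditorProvider.register(context));

  const server = http.createServer((req, res) => {
    res.setHeader('Access-Control-Allow-Origin', '*');
    res.setHeader('Access-Control-Allow-Methods', 'GET, POST, OPTIONS, PUT, PATCH, DELETE');
    res.setHeader('Access-Control-Allow-Headers', 'X-Requested-With,content-type');
    res.setHeader('Access-Control-Allow-Credentials', 'true');
    if (req.method === 'OPTIONS') { // answer CORS preflights instead of leaving them hanging
      res.writeHead(204);
      res.end();
      return;
    }
    if (req.method === 'POST' && req.url === '/microm') {
      // Save main.mp3 next to the document currently open in the editor
      const target = path.join(path.dirname((global as any).documentUri!.fsPath), 'main.mp3');
      const stream = fs.createWriteStream(target);
      req.pipe(stream); // pipe() also ends (and closes) the file stream when the request ends
      stream.on('finish', () => {
        vscode.window.showInformationMessage('Audio file generated successfully!');
        res.writeHead(200);
        res.end('success!');
      });
      stream.on('error', (err) => { res.writeHead(500); res.end(String(err)); });
      return;
    }
    res.writeHead(404); // unknown route: respond instead of leaving the request open
    res.end();
  }).listen(8840, '127.0.0.1'); // only accept connections from this machine

  context.subscriptions.push({ dispose: () => server.close() }); // free the port on deactivation
}
```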

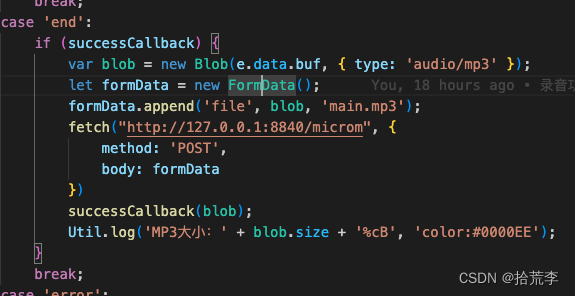

When recording ends, the browser side calls this endpoint to send the data over.

Tested both locally and in production without problems. Done!

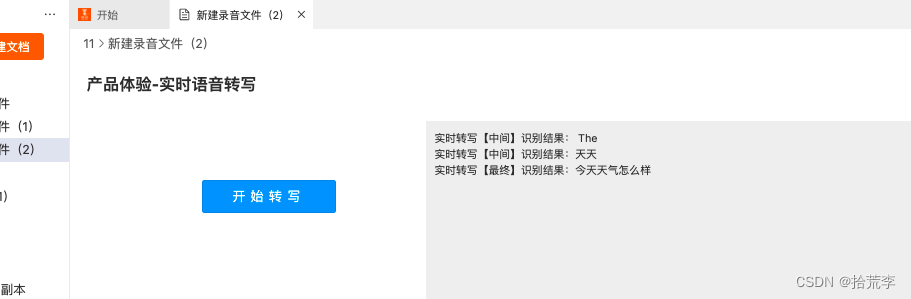

Real-time speech transcription

Real-time transcription calls iFlytek's API. Here is the demo page code that iFlytek provides:
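The demo's `getHandShakeParams()` builds the RTASR handshake in four steps:

- `ts` is the current Unix time in seconds.
- The base string is the MD5 hex digest of `appId + ts`.
- That string is signed with HMAC-SHA1, using the API key as the secret.
- The signature is Base64-encoded, then URL-encoded, to give `signa`.

An API key should not ship inside a webview. The demo connects to its own proxy and leaves the line that appends these parameters commented out, which suggests the proxy handles authentication. The sketch below does the same computation in Node with the built-in `crypto` module, as a server or the extension host might. `buildHandshakeQuery` is a hypothetical name and the credentials are placeholders.

```javascript
const crypto = require('crypto');

// Build the RTASR handshake query string the way getHandShakeParams() does:
//   baseString = MD5 hex digest of (appId + ts)
//   signa      = Base64(HMAC-SHA1(baseString, apiKey)), then URL-encoded
// ts defaults to the current Unix time in seconds; it is a parameter so
// results can be reproduced.
function buildHandshakeQuery(appId, apiKey, ts = Math.floor(Date.now() / 1000)) {
  const baseString = crypto.createHash('md5').update(appId + ts).digest('hex');
  const signa = crypto.createHmac('sha1', apiKey).update(baseString).digest('base64');
  return `?appid=${appId}&ts=${ts}&signa=${encodeURIComponent(signa)}`;
}

// Placeholder credentials, as in the demo
console.log(buildHandshakeQuery('xxxxx', 'xxxxx'));
```

A server holding the key could append this string to `wss://rtasr.xfyun.cn/v1/ws`, the direct URL that the demo leaves commented out.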

```javascript
/**
 * Created by lcw on 2023/5/20.
 *
 * Real-time speech transcription (RTASR) WebAPI example. API docs (must read): https://www.xfyun.cn/doc/asr/rtasr/API.html
 * Error codes:
 * https://www.xfyun.cn/doc/asr/rtasr/API.html
 * https://www.xfyun.cn/document/error-code (must read when `code` returns an error)
 */

// Not defined in this excerpt; placeholder text for the "not supported" alerts below
const notSupportTip = 'Recording is not supported in this browser'

if (typeof Worker === 'undefined') { // typeof returns a string
  // Web Workers not supported
  alert('Web Workers are not supported')
} else {
  // Web Workers supported
  console.log('Web Workers are supported')
}

let recorderWorker
fetch(
  "https://lawdawn-download.oss-cn-beijing.aliyuncs.com/js/transformpcm.worker.js"
)
  .then((response) => response.blob())
  .then((blob) => {
    const url = URL.createObjectURL(blob)
    recorderWorker = new Worker(url)
    recorderWorker.onmessage = function (e) {
      // Queue the transcoded bytes for sending
      buffer.push(...e.data.buffer)
    }
  })

// Audio transcoding worker, loaded directly from a local file:
// let recorderWorker = new Worker('transformpcm.worker.js');

// Buffered audio waiting to be sent
let buffer = []

let AudioContext = window.AudioContext || window.webkitAudioContext
navigator.getUserMedia = navigator.getUserMedia || navigator.webkitGetUserMedia || navigator.mozGetUserMedia || navigator.msGetUserMedia

class IatRecorder {
  constructor(config) {
    this.config = config
    this.state = 'ing'
    // Get these from the iFlytek console: My Apps > Real-time Speech Transcription
    this.appId = 'xxxxx'
    this.apiKey = 'xxxxx'
  }

  start() {
    this.stop()
    if (navigator.getUserMedia && AudioContext) {
      this.state = 'ing'
      if (!this.recorder) {
        var context = new AudioContext()
        this.context = context
        this.recorder = context.createScriptProcessor(0, 1, 1)
        var getMediaSuccess = (stream) => {
          var mediaStream = this.context.createMediaStreamSource(stream)
          this.mediaStream = mediaStream
          this.recorder.onaudioprocess = (e) => {
            this.sendData(e.inputBuffer.getChannelData(0))
          }
          this.connectWebsocket()
        }
        var getMediaFail = (e) => {
          this.recorder = null
          this.mediaStream = null
          this.context = null
          console.log('Failed to access the microphone')
        }
        if (navigator.mediaDevices && navigator.mediaDevices.getUserMedia) {
          navigator.mediaDevices.getUserMedia({
            audio: true,
            video: false
          }).then((stream) => {
            getMediaSuccess(stream)
          }).catch((e) => {
            getMediaFail(e)
          })
        } else {
          navigator.getUserMedia({
            audio: true,
            video: false
          }, (stream) => {
            getMediaSuccess(stream)
          }, function (e) {
            getMediaFail(e)
          })
        }
      } else {
        this.connectWebsocket()
      }
    }
  }

  stop() {
    this.state = 'end'
    try {
      this.mediaStream.disconnect(this.recorder)
      this.recorder.disconnect()
    } catch (e) { }
  }

  sendData(buffer) {
    recorderWorker.postMessage({
      command: 'transform',
      buffer: buffer
    })
  }

  // Build the handshake parameters:
  // signa = URL-encoded Base64(HmacSHA1(MD5(appId + ts), apiKey))
  // hex_md5, CryptoJSNew and CryptoJS come from crypto libraries loaded by the demo page
  getHandShakeParams() {
    var appId = this.appId
    var secretKey = this.apiKey
    var ts = Math.floor(new Date().getTime() / 1000) // Unix time in seconds
    var signa = hex_md5(appId + ts)
    var signatureSha = CryptoJSNew.HmacSHA1(signa, secretKey)
    var signature = CryptoJS.enc.Base64.stringify(signatureSha)
    signature = encodeURIComponent(signature)
    return "?appid=" + appId + "&ts=" + ts + "&signa=" + signature;
  }

  connectWebsocket() {
    // Direct connection to iFlytek:
    // var url = 'wss://rtasr.xfyun.cn/v1/ws'
    var url = 'ws://xxxx.xxx.xx/xf-rtasr';
    var urlParam = this.getHandShakeParams()
    // Needed when connecting to iFlytek directly:
    // url = `${url}${urlParam}`
    if ('WebSocket' in window) {
      this.ws = new WebSocket(url)
    } else if ('MozWebSocket' in window) {
      this.ws = new MozWebSocket(url)
    } else {
      alert(notSupportTip)
      return null
    }
    this.ws.onopen = (e) => {
      if (e.isTrusted) {
        // Start feeding audio only once the socket is open
        this.mediaStream.connect(this.recorder)
        this.recorder.connect(this.context.destination)
        setTimeout(() => {
          this.wsOpened(e)
        }, 500)
        this.config.onStart && this.config.onStart(e)
      } else {
        alert('An error occurred');
      }
    }
    this.ws.onmessage = (e) => {
      // this.config.onMessage && this.config.onMessage(e)
      this.wsOnMessage(e)
    }
    this.ws.onerror = (e) => {
      console.log('err-->', e)
      this.stop()
      console.log("Connection closed: ws.onerror");
      this.config.onError && this.config.onError(e)
    }
    this.ws.onclose = (e) => {
      this.stop()
      console.log("Connection closed: ws.onclose");
      $('.start-button').attr('disabled', false);
      this.config.onClose && this.config.onClose(e)
    }
  }

  wsOpened() {
    if (this.ws.readyState !== 1) {
      return
    }
    var audioData = buffer.splice(0, 1280)
    this.ws.send(new Int8Array(audioData))
    // Then send up to 1280 bytes every 40 ms
    this.handlerInterval = setInterval(() => {
      // WebSocket no longer open
      if (this.ws.readyState !== 1) {
        clearInterval(this.handlerInterval)
        return
      }
      if (buffer.length === 0) {
        if (this.state === 'end') {
          this.ws.send('{"end": true}')
          console.log("End marker sent");
          clearInterval(this.handlerInterval)
        }
        return false
      }
      var audioData = buffer.splice(0, 1280)
      if (audioData.length > 0) {
        this.ws.send(new Int8Array(audioData))
      }
    }, 40)
  }

  wsOnMessage(e) {
    let jsonData = JSON.parse(e.data)
    // if (jsonData.action == "started") {
    //   // handshake succeeded
    //   console.log("Handshake succeeded");
    // } else if (jsonData.action == "result") {
    // transcription result
    if (this.config.onMessage && typeof this.config.onMessage == 'function') {
      this.config.onMessage(jsonData)
    }
    // } else if (jsonData.action == "error") {
    //   // connection error
    //   console.log("Error:", jsonData);
    // }
  }

  ArrayBufferToBase64(buffer) {
    var binary = ''
    var bytes = new Uint8Array(buffer)
    var len = bytes.byteLength
    for (var i = 0; i < len; i++) {
      binary += String.fromCharCode(bytes[i])
    }
    return window.btoa(binary)
  }
}

class IatTaste {
  constructor() {
    var iatRecorder = new IatRecorder({
      onClose: () => {
        this.stop()
        this.reset()
      },
      onError: (data) => {
        this.stop()
        this.reset()
        alert('WebSocket connection failed')
      },
      onMessage: (message) => {
        // this.setResult(JSON.parse(message))
        this.setResult(message)
      },
      onStart: () => {
        $('hr').addClass('hr')
        var dialect = $('.dialect-select').find('option:selected').text()
        $('.taste-content').css('display', 'none')
        $('.start-taste').addClass('flex-display-1')
        $('.dialect-select').css('display', 'none')
        $('.start-button').text(this.text.stop)
        $('.time-box').addClass('flex-display-1')
        $('.dialect').text(dialect).css('display', 'inline-block')
        this.counterDown($('.used-time'))
      }
    })
    this.iatRecorder = iatRecorder
    this.counterDownDOM = $('.used-time')
    this.counterDownTime = 0
    // Button labels; text.start must match the initial label of .start-button in the page's HTML
    this.text = {
      start: 'Start transcription',
      stop: 'Stop transcription'
    }
    this.resultText = ''
  }

  start() {
    this.iatRecorder.start()
  }

  stop() {
    $('hr').removeClass('hr')
    this.iatRecorder.stop()
  }

  reset() {
    this.counterDownTime = 0
    clearTimeout(this.counterDownTimeout)
    buffer = []
    $('.time-box').removeClass('flex-display-1').css('display', 'none')
    $('.start-button').text(this.text.start)
    $('.dialect').css('display', 'none')
    $('.dialect-select').css('display', 'inline-block')
    $('.taste-button').css('background', '#0b99ff')
  }

  init() {
    let self = this
    // Start
    $('#taste_button').click(function () {
      if (navigator.getUserMedia && AudioContext && recorderWorker) {
        self.start()
      } else {
        alert(notSupportTip)
      }
    })
    // Stop
    $('.start-button').click(function () {
      if ($(this).text() === self.text.start && !$(this).prop('disabled')) {
        $('#result_output').text('')
        self.resultText = ''
        self.start()
        //console.log("Button enabled, starting normally" + $(this).prop('disabled'))
      } else {
        //$('.taste-content').css('display', 'none')
        $('.start-button').attr('disabled', true);
        self.stop()
        // reset: use self, because inside a jQuery handler `this` is the clicked element
        self.counterDownTime = 0
        clearTimeout(self.counterDownTimeout)
        buffer = []
        $('.time-box').removeClass('flex-display-1').css('display', 'none')
        $('.start-button').text('Stopping transcription...')
        $('.dialect').css('display', 'none')
        $('.taste-button').css('background', '#8E8E8E')
        $('.dialect-select').css('display', 'inline-block')
        //console.log("Button enabled, stopping normally" + $(this).prop('disabled'))
      }
    })
  }

  setResult(data) {
    let rtasrResult = []
    var currentText = $('#result_output').html()
    rtasrResult[data.seg_id] = data
    rtasrResult.forEach(i => {
      let str = "Real-time transcription "
      str += (i.cn.st.type == 0) ? "[final] result: " : "[interim] result: "
      i.cn.st.rt.forEach(j => {
        j.ws.forEach(k => {
          k.cw.forEach(l => {
            str += l.w
          })
        })
      })
      if (currentText.length == 0) {
        $('#result_output').html(str)
      } else {
        $('#result_output').html(currentText + "<br>" + str)
      }
      var ele = document.getElementById('result_output');
      ele.scrollTop = ele.scrollHeight;
    })
  }

  counterDown() {
    /* // 5-minute limit
    if (this.counterDownTime === 300) {
      this.counterDownDOM.text('05: 00')
      this.stop()
    } else if (this.counterDownTime > 300) {
      this.reset()
      return false
    } else */
    if (this.counterDownTime >= 0 && this.counterDownTime < 10) {
      this.counterDownDOM.text('00: 0' + this.counterDownTime)
    } else if (this.counterDownTime >= 10 && this.counterDownTime < 60) {
      this.counterDownDOM.text('00: ' + this.counterDownTime)
    } else if (this.counterDownTime % 60 >= 0 && this.counterDownTime % 60 < 10) {
      this.counterDownDOM.text('0' + parseInt(this.counterDownTime / 60) + ': 0' + this.counterDownTime % 60)
    } else {
      this.counterDownDOM.text('0' + parseInt(this.counterDownTime / 60) + ': ' + this.counterDownTime % 60)
    }
    this.counterDownTime++
    this.counterDownTimeout = setTimeout(() => {
      this.counterDown()
    }, 1000)
  }
}

var iatTaste = new IatTaste()
iatTaste.init()
```
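`wsOpened()` sends 1280 bytes every 40 ms. That pairing follows from the audio format iFlytek's RTASR documentation asks for: 16 kHz, 16-bit, mono PCM. At that format, 16000 samples/s × 2 bytes = 32000 bytes/s, so 40 ms of audio is 1280 bytes and the sender keeps pace with real time. The transformpcm worker, whose source is not shown, is presumably where the browser's float samples are converted into that format. The sketch below covers the arithmetic and the queue slicing. `pcmBytesFor` and `takeChunk` are hypothetical names.

```javascript
// Bytes of raw PCM covering `ms` milliseconds of audio.
function pcmBytesFor(ms, sampleRate = 16000, bytesPerSample = 2, channels = 1) {
  return (sampleRate * bytesPerSample * channels * ms) / 1000;
}

// Remove one chunk from the front of a byte queue, as wsOpened() does with
// buffer.splice(0, 1280), and wrap it in an Int8Array for ws.send().
function takeChunk(queue, size = pcmBytesFor(40)) {
  return new Int8Array(queue.splice(0, size));
}

console.log(pcmBytesFor(40)); // 1280
const queue = new Array(3000).fill(0);
console.log(takeChunk(queue).length, takeChunk(queue).length, takeChunk(queue).length, queue.length); // 1280 1280 440 0
```

When the queue is empty and recording has stopped, the demo sends the text frame `{"end": true}` so that the service knows the audio is complete.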
